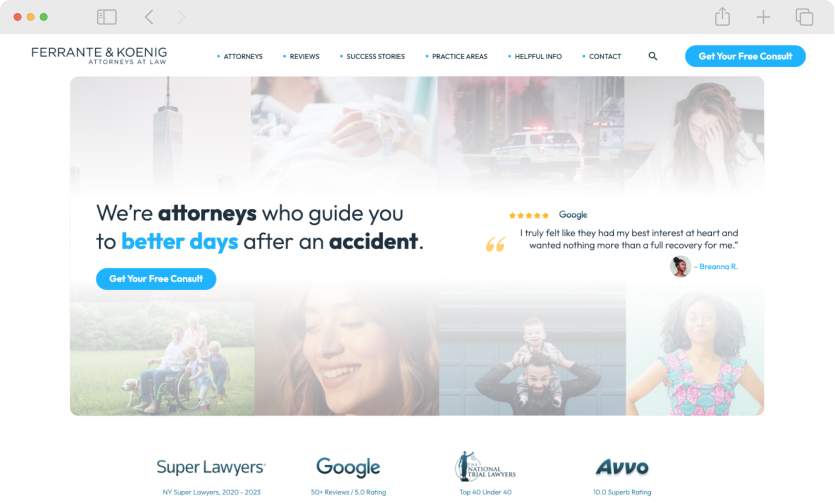

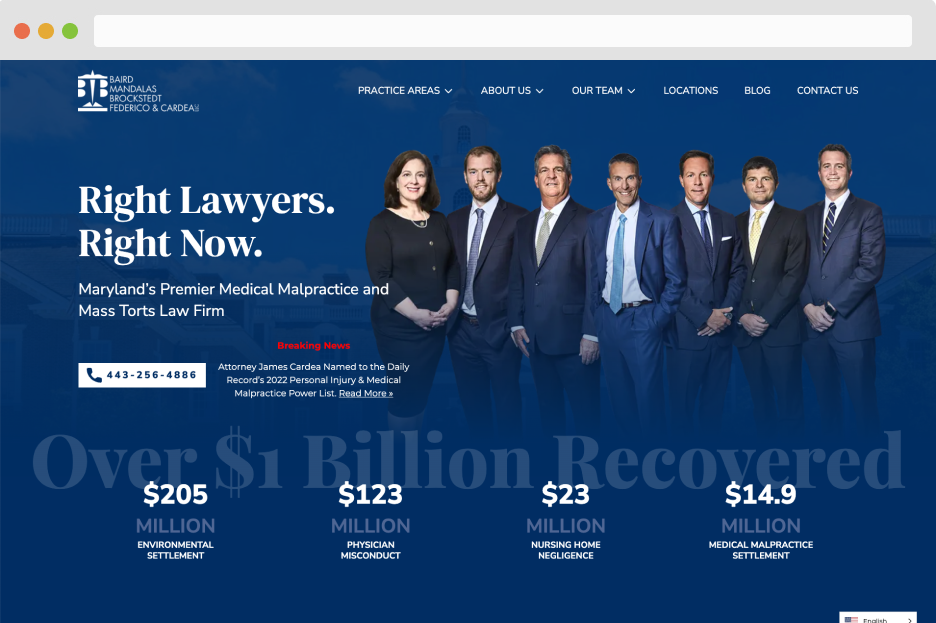

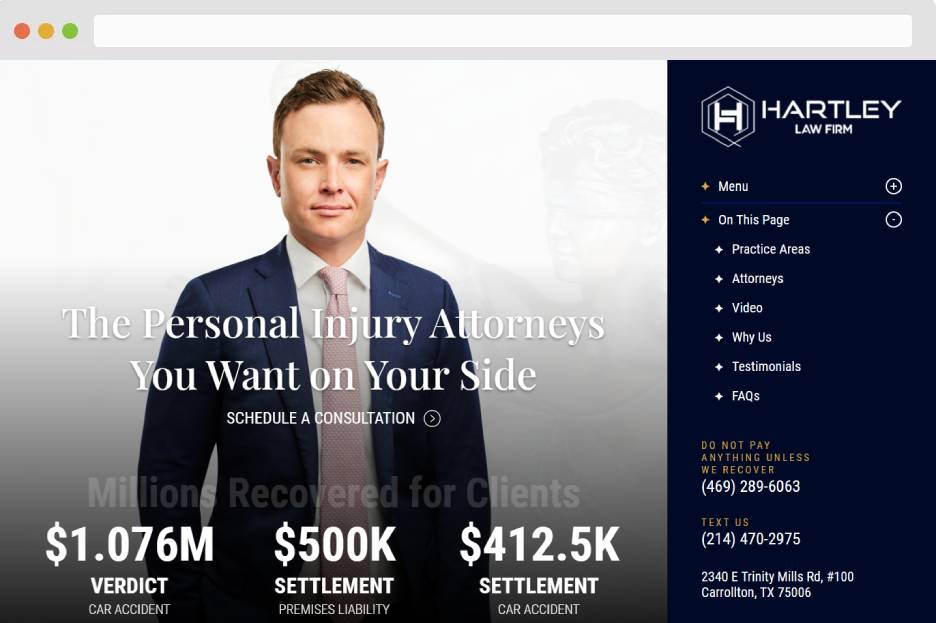

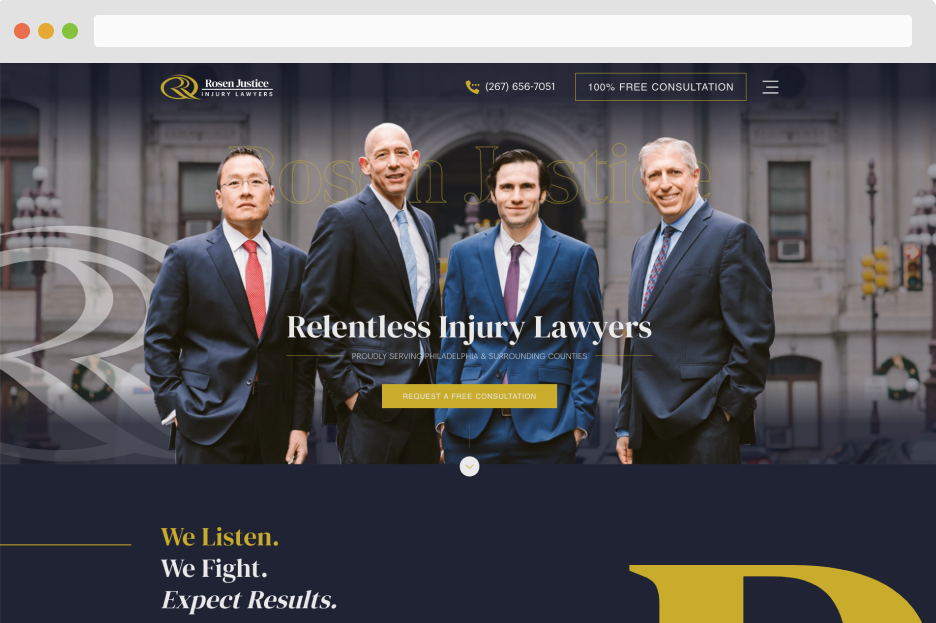

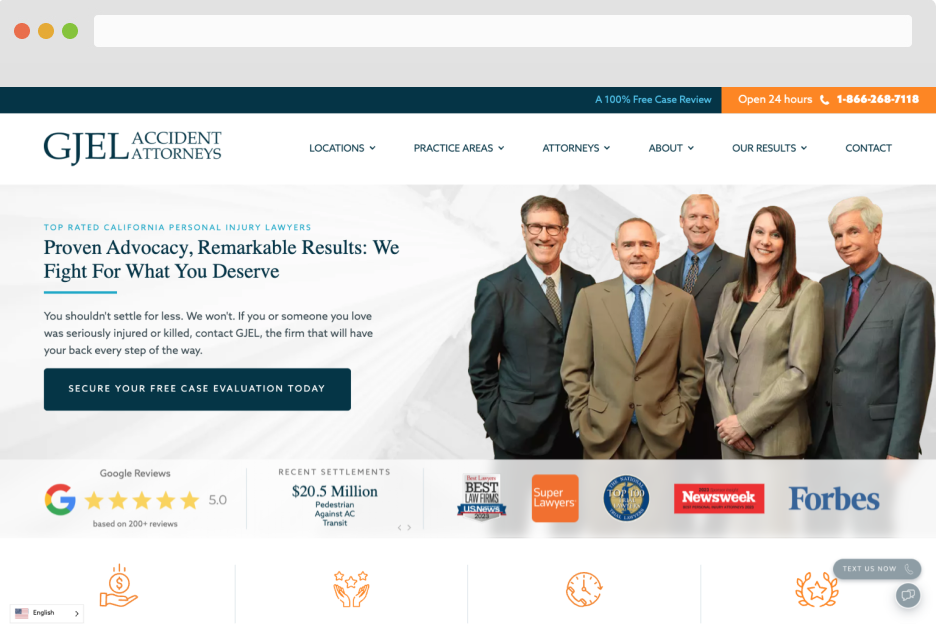

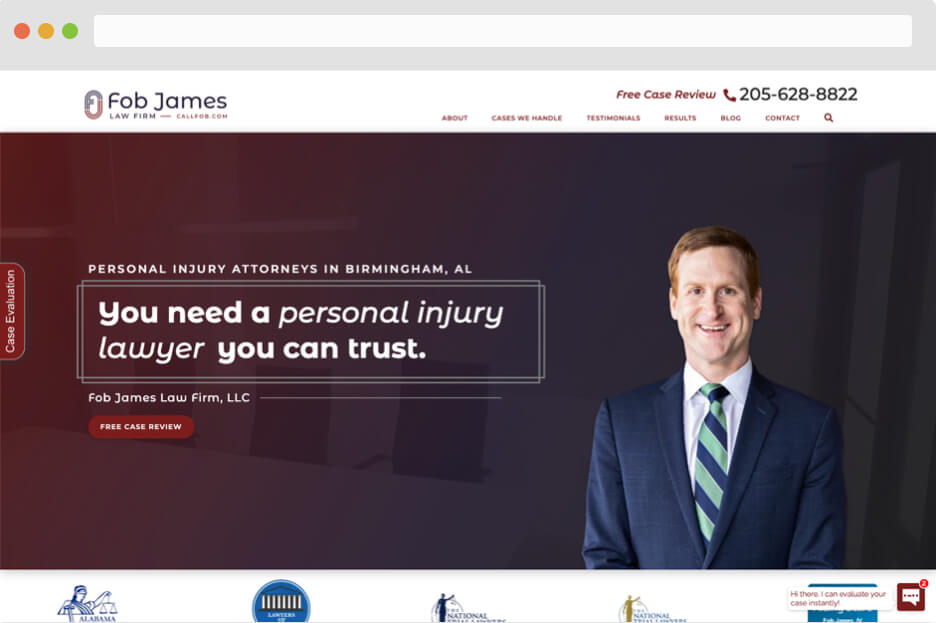

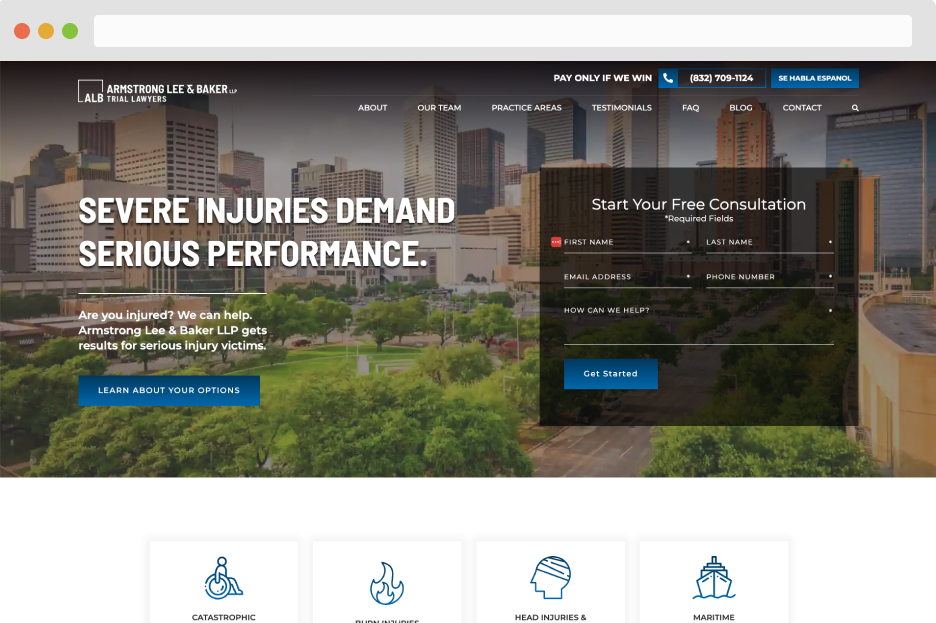

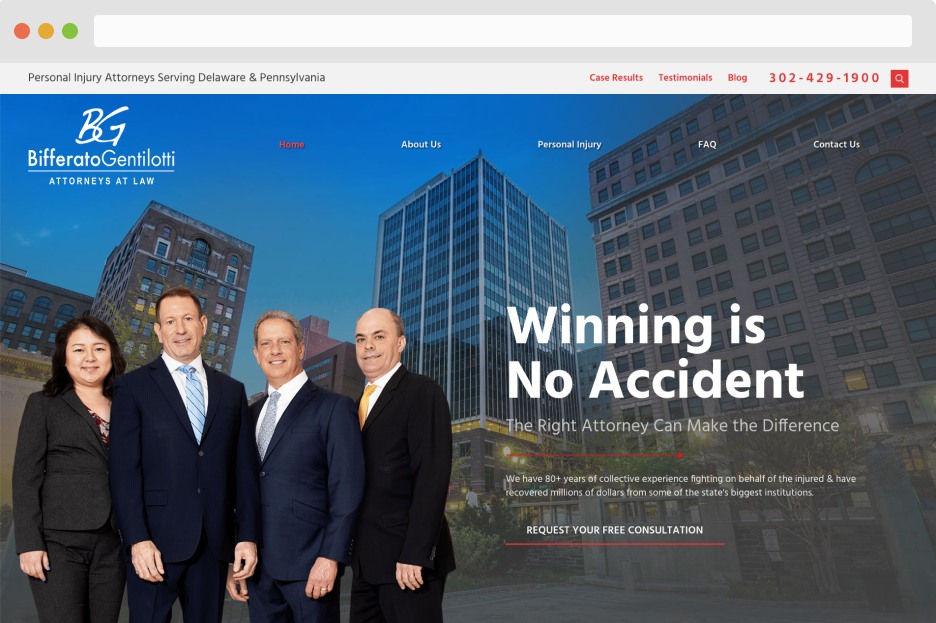

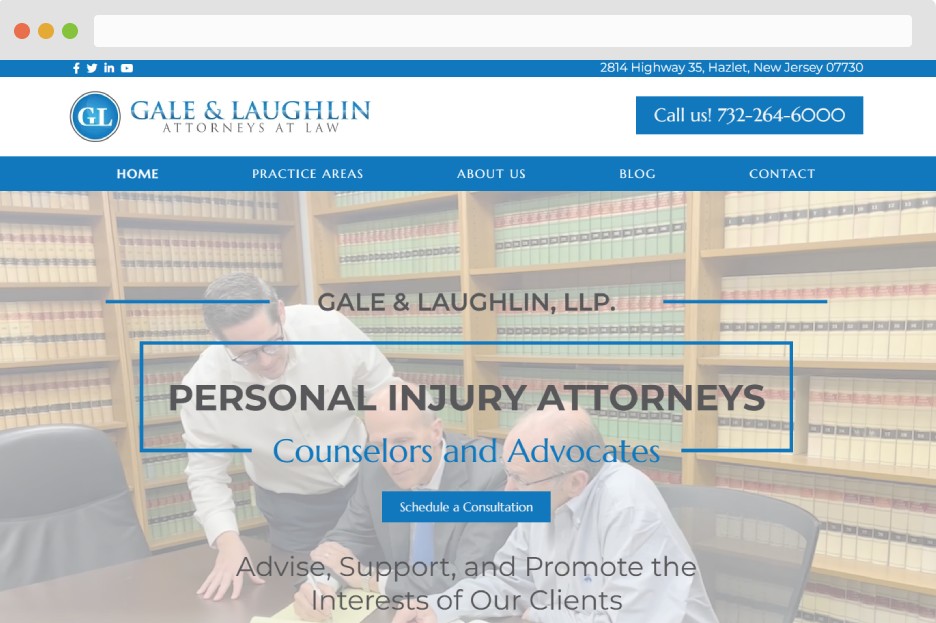

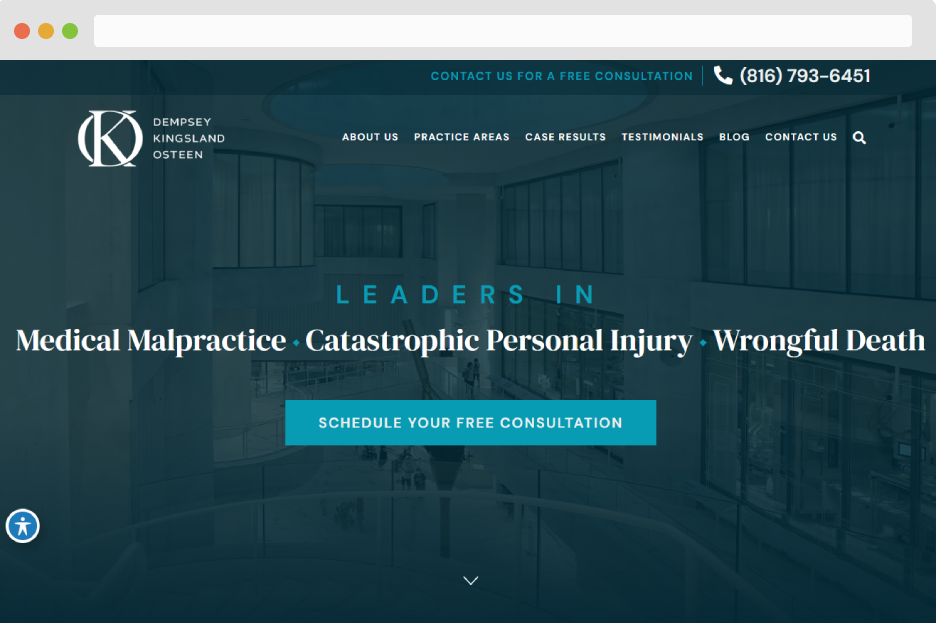

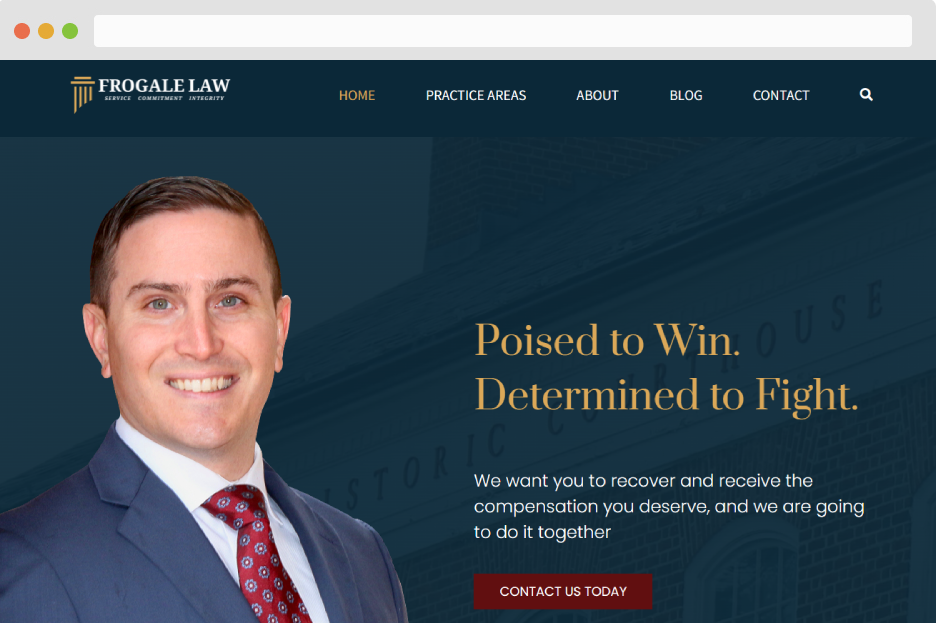

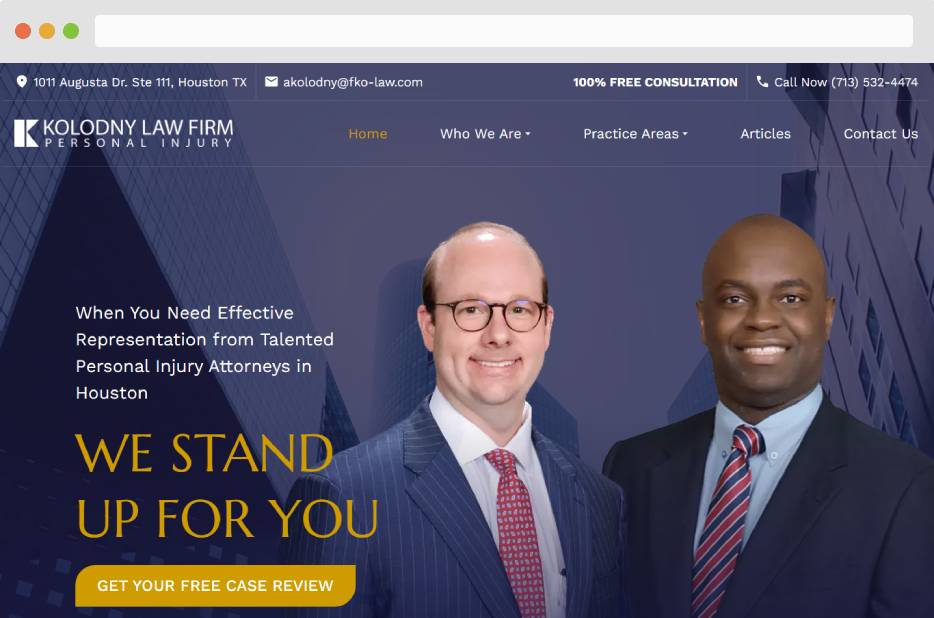

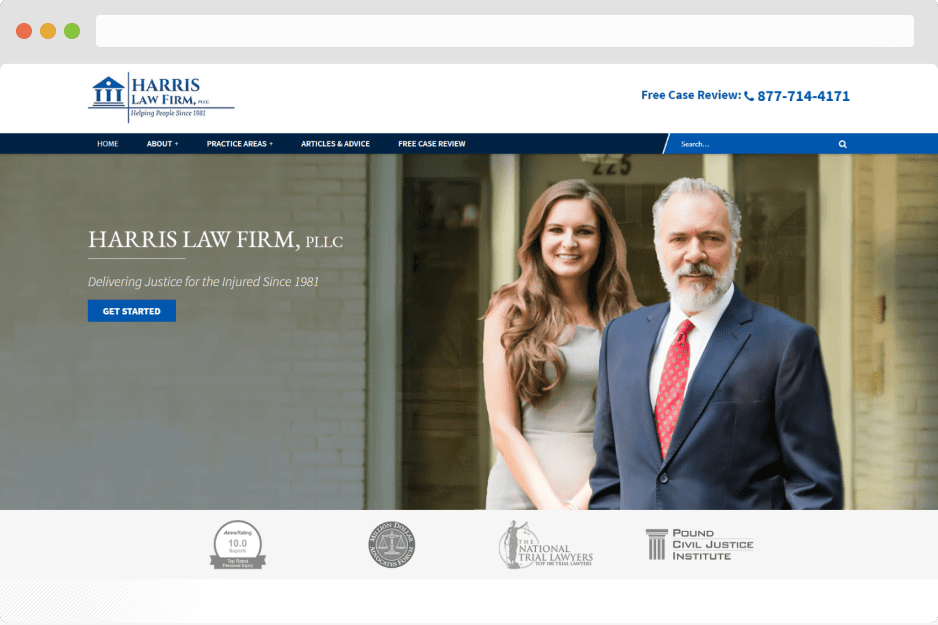

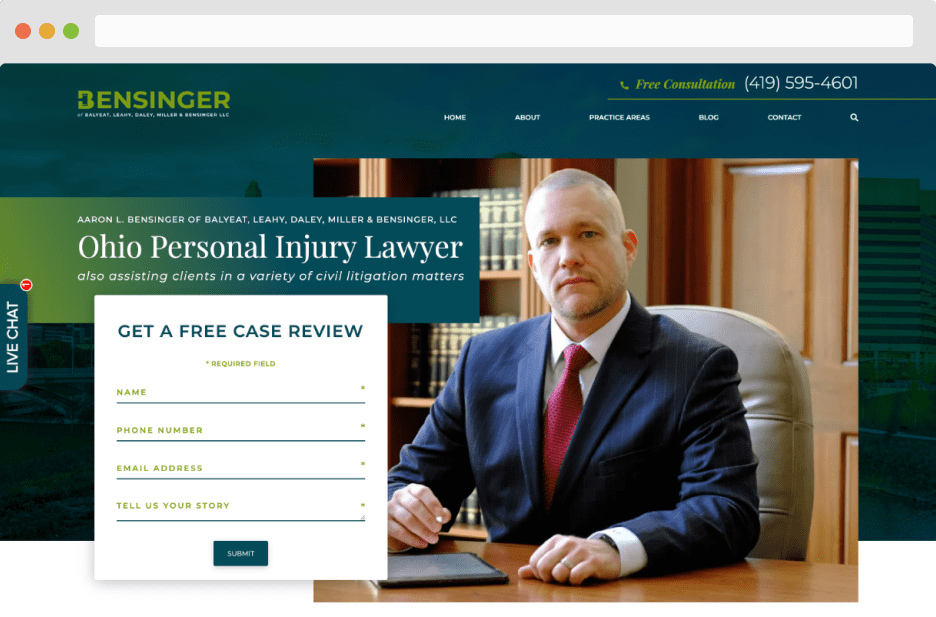

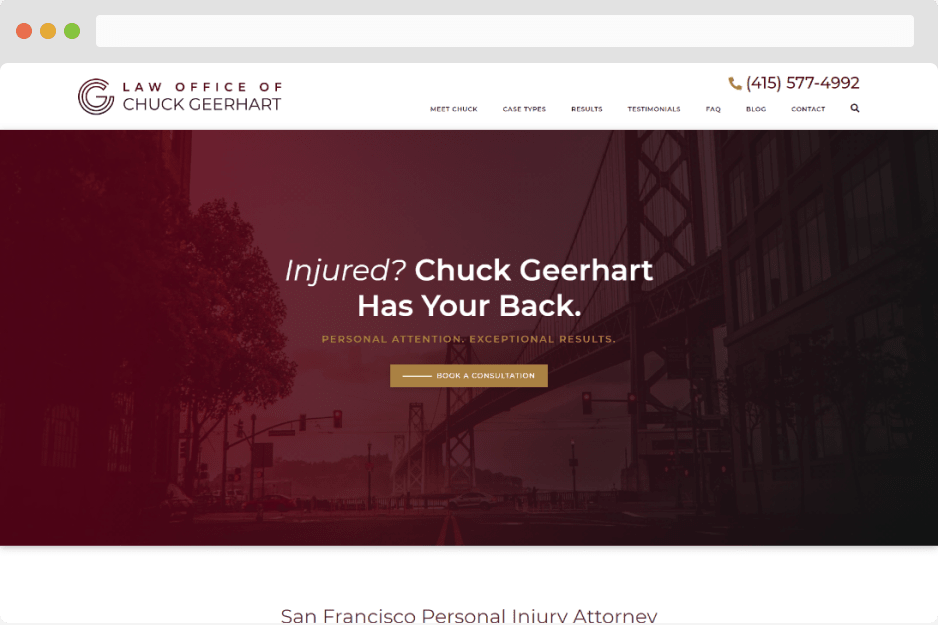

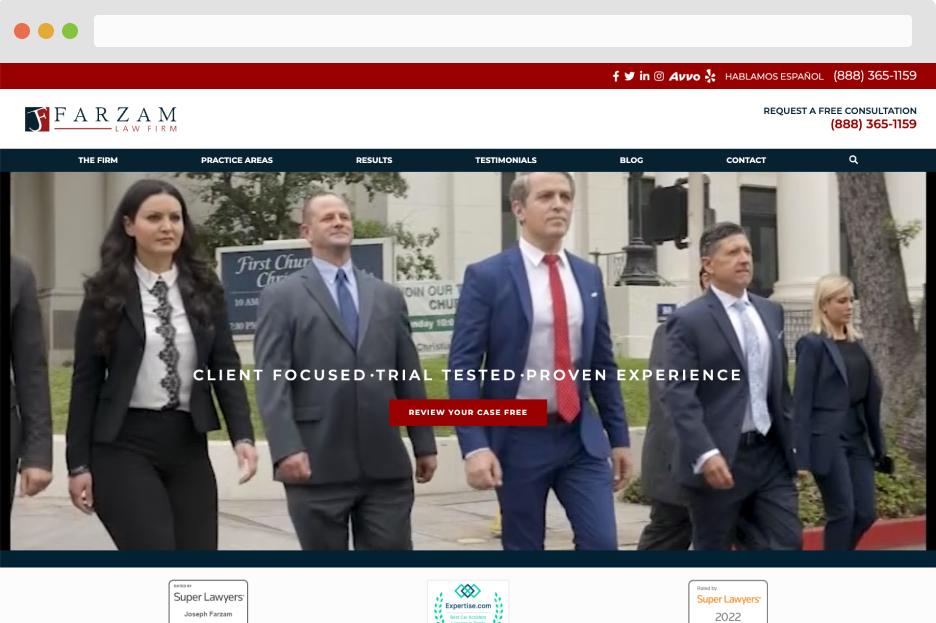

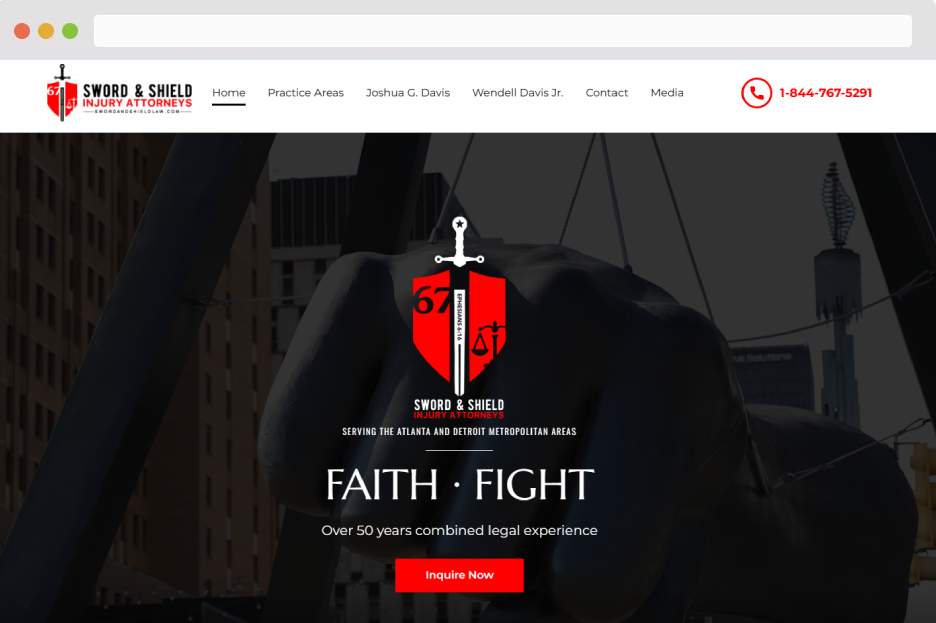

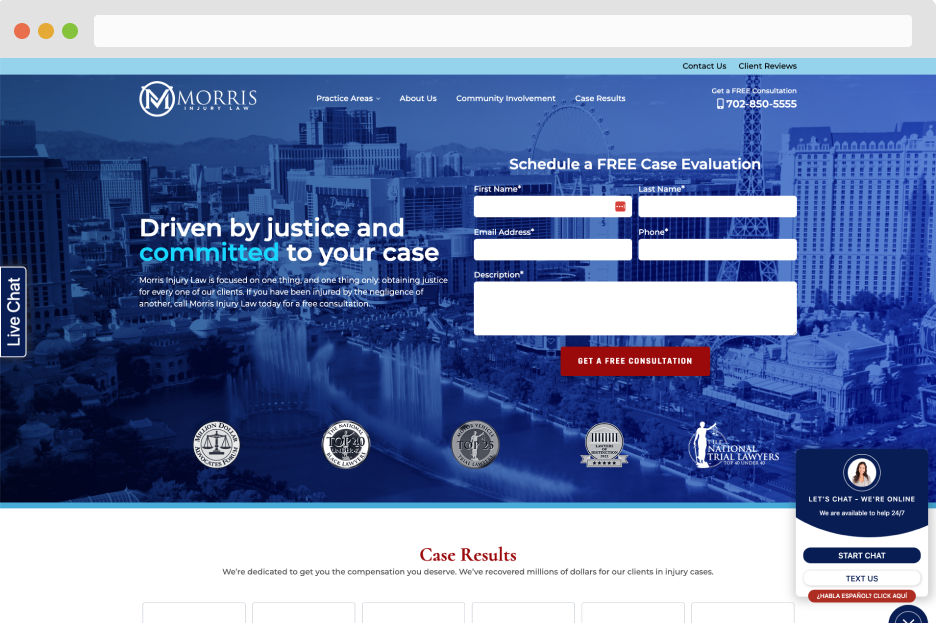

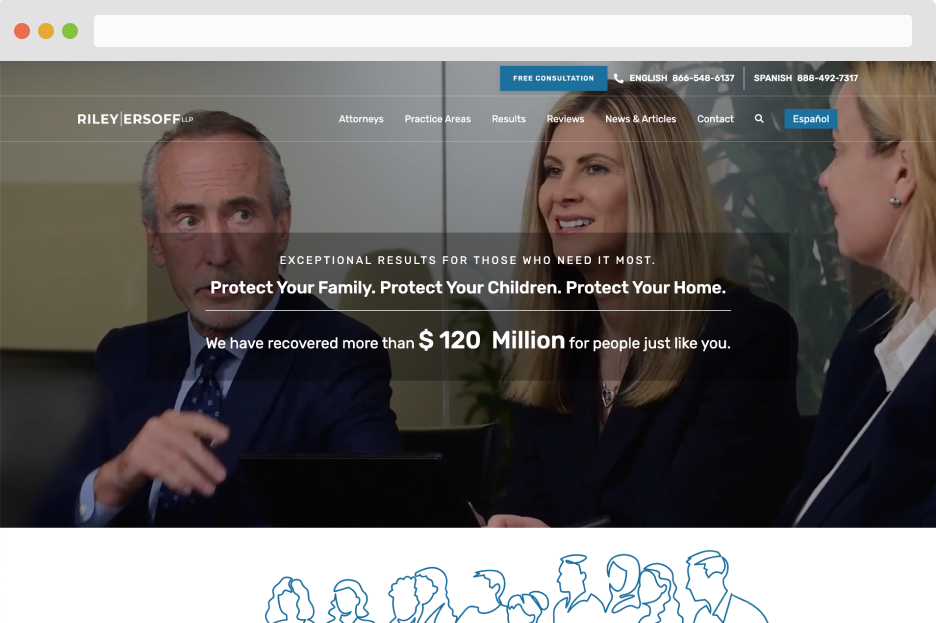

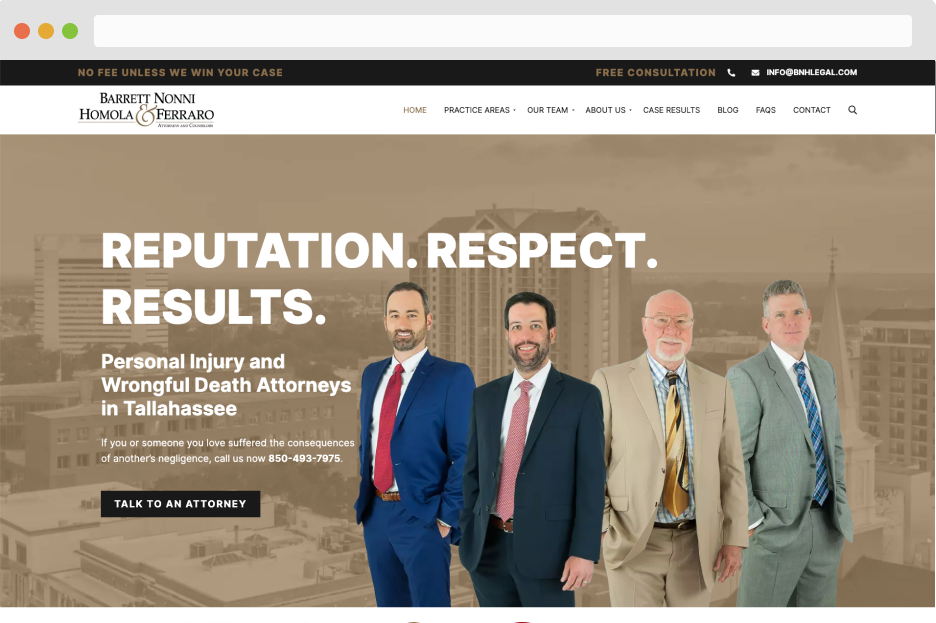

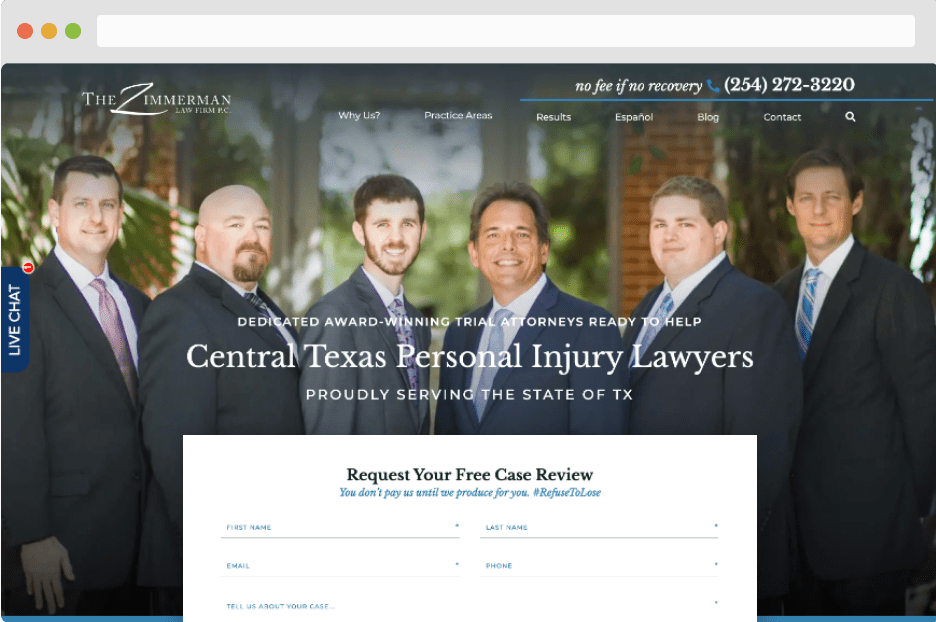

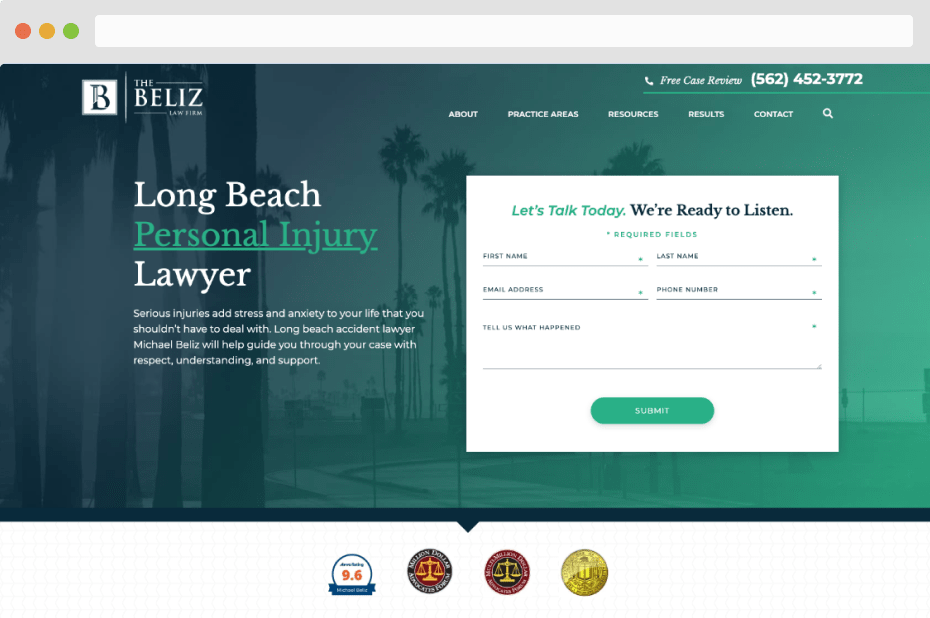

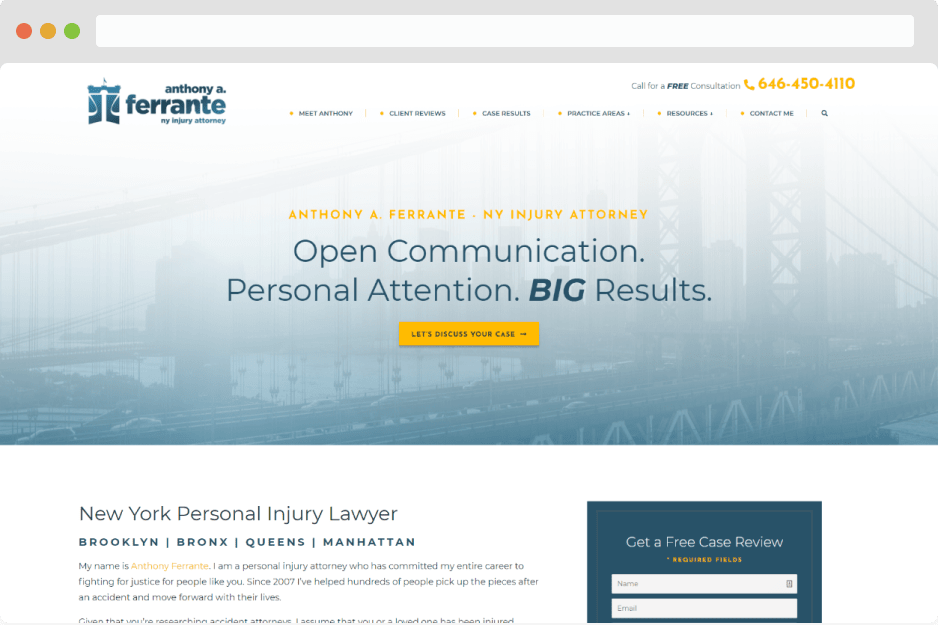

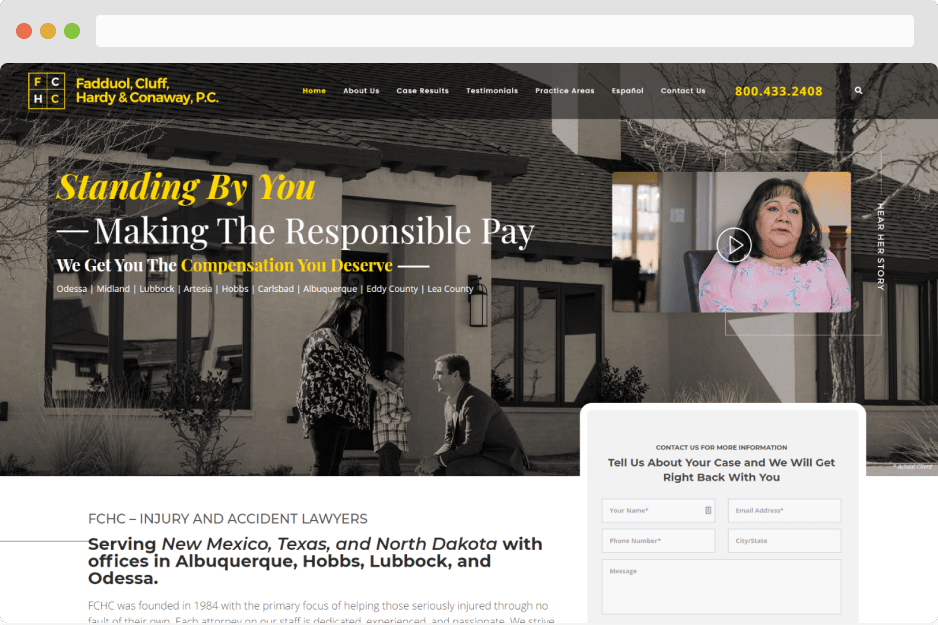

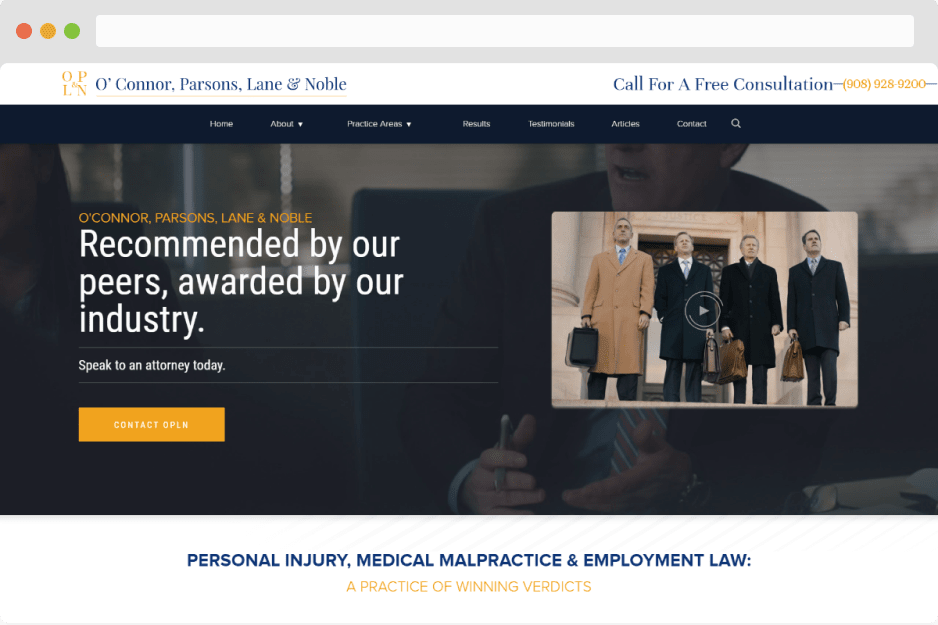

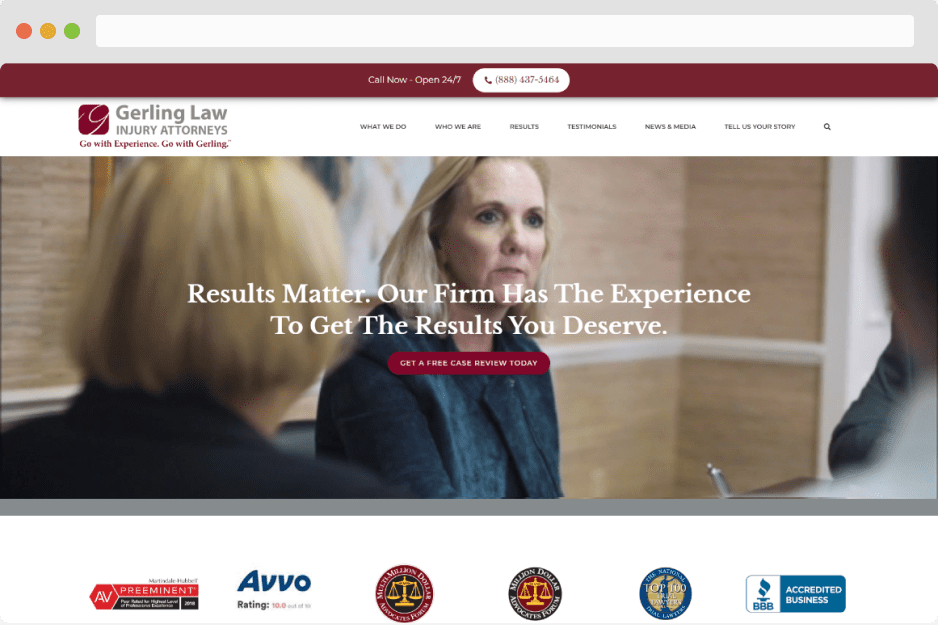

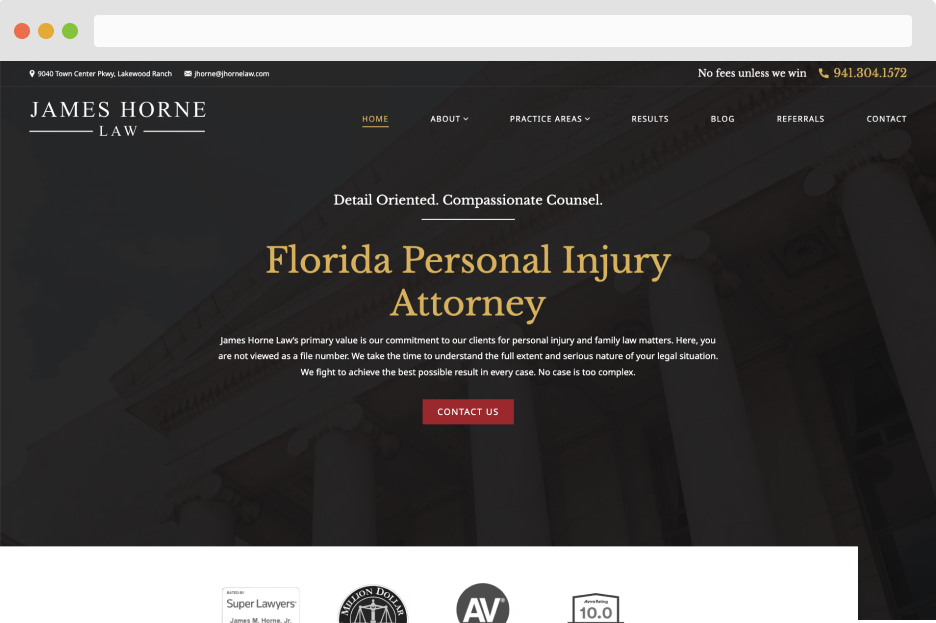

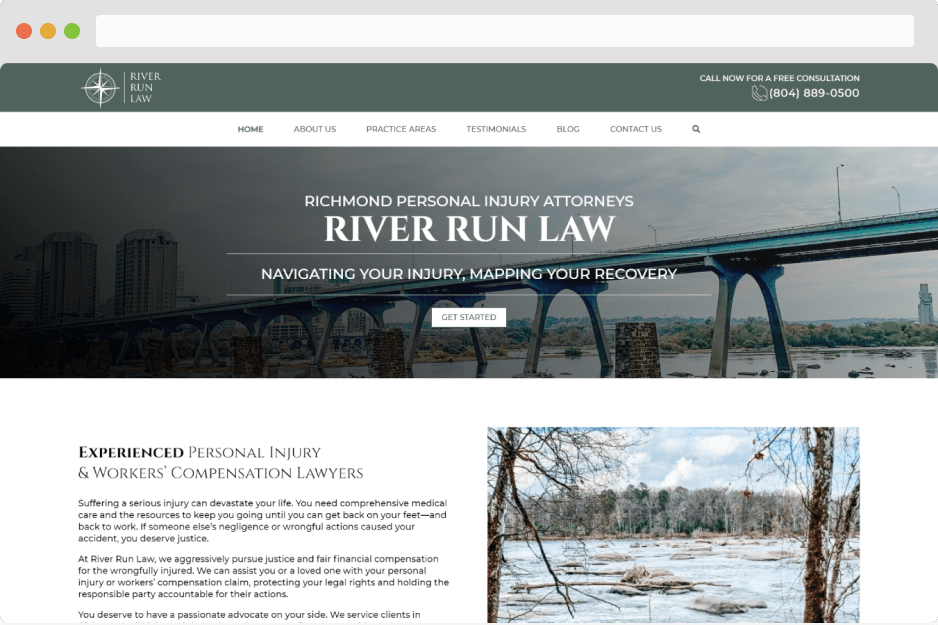

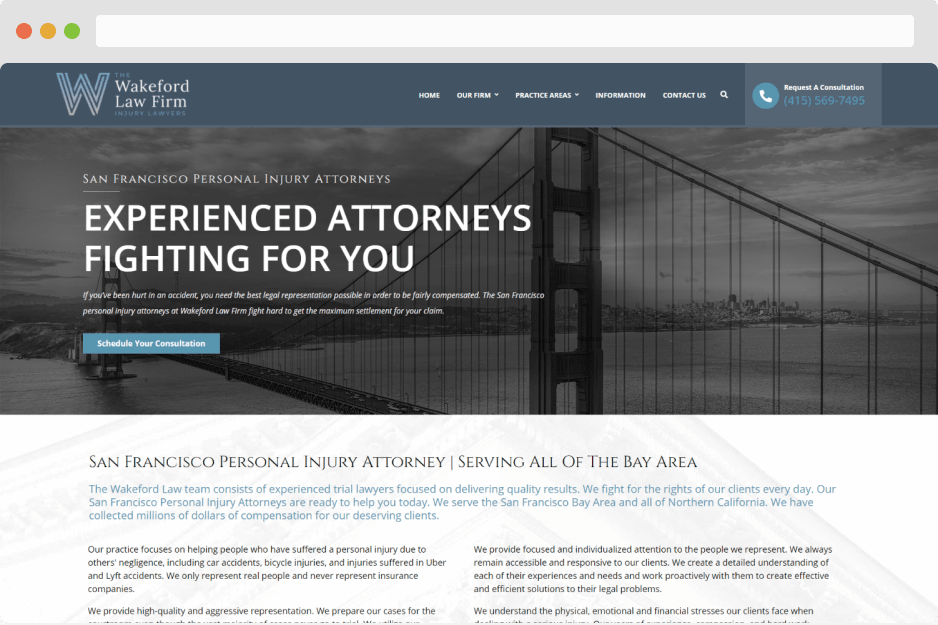

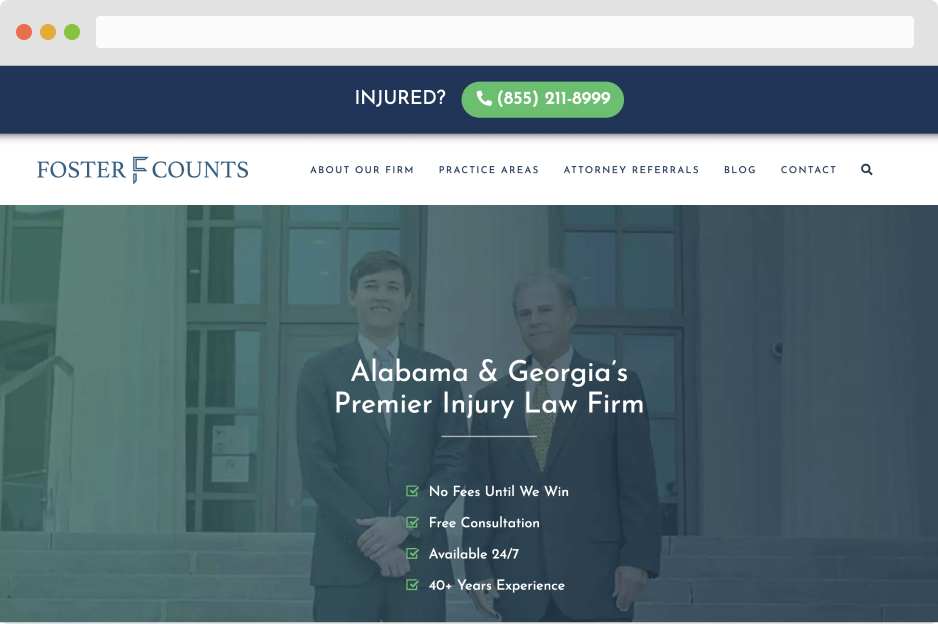

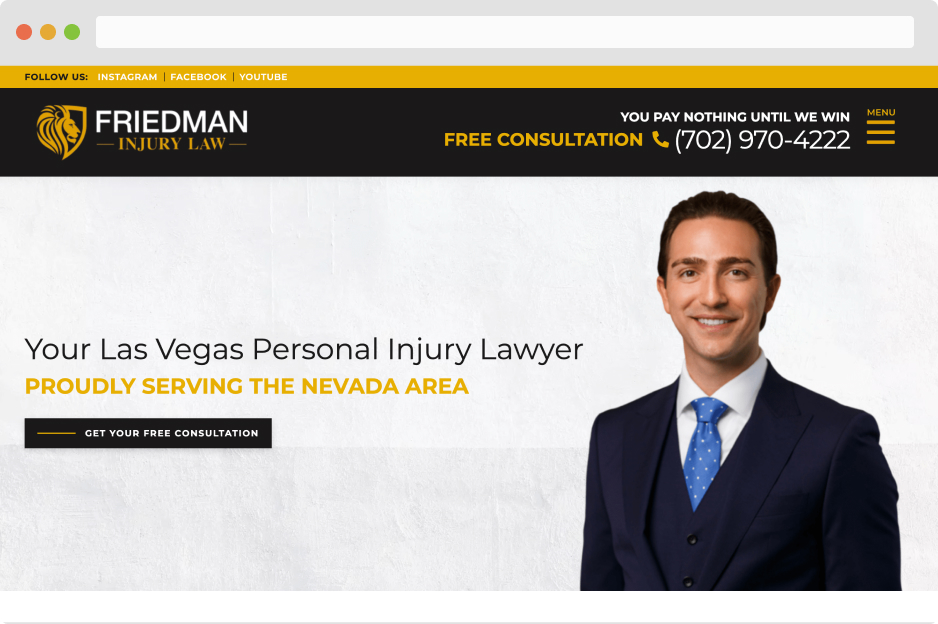

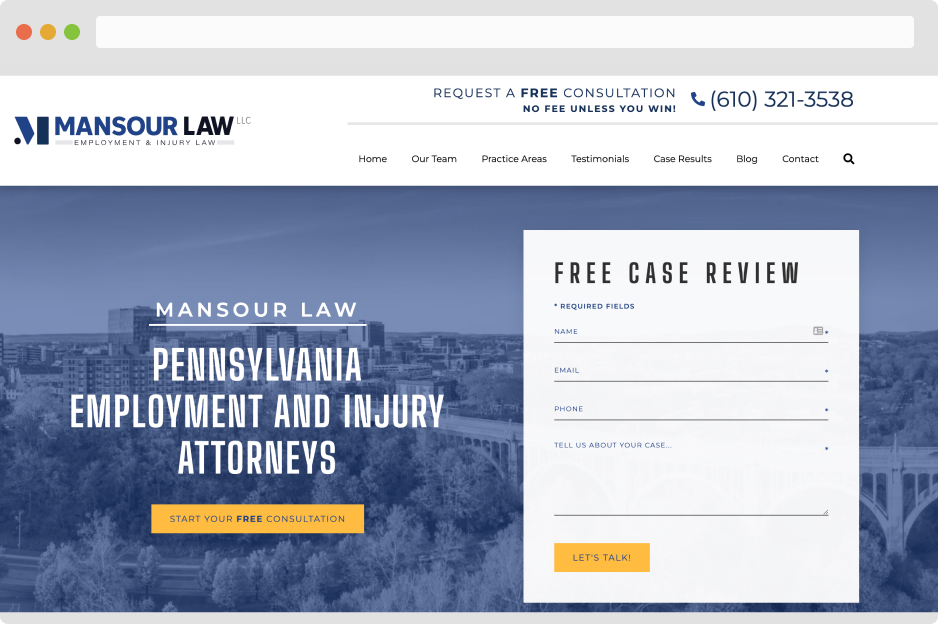

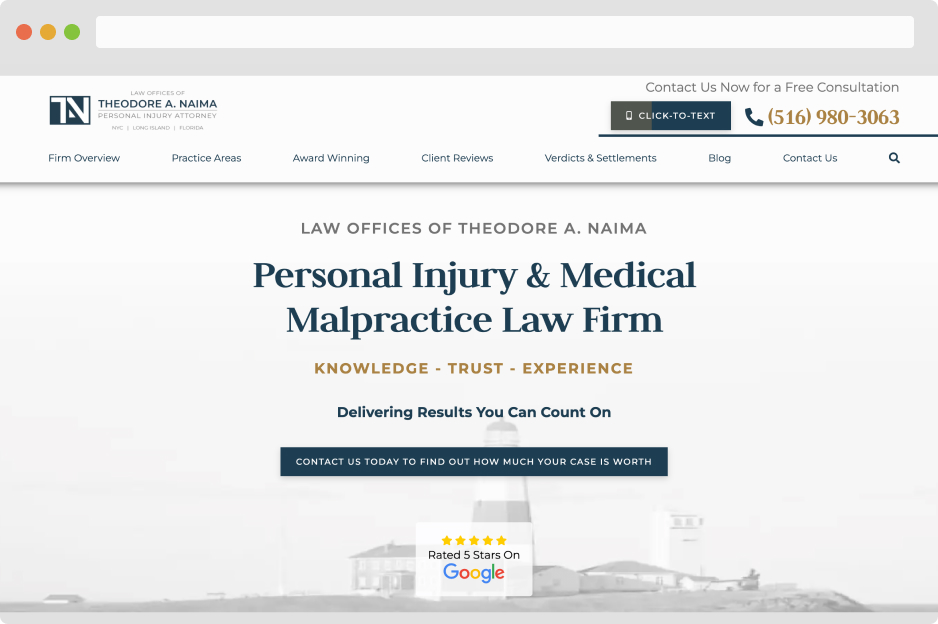

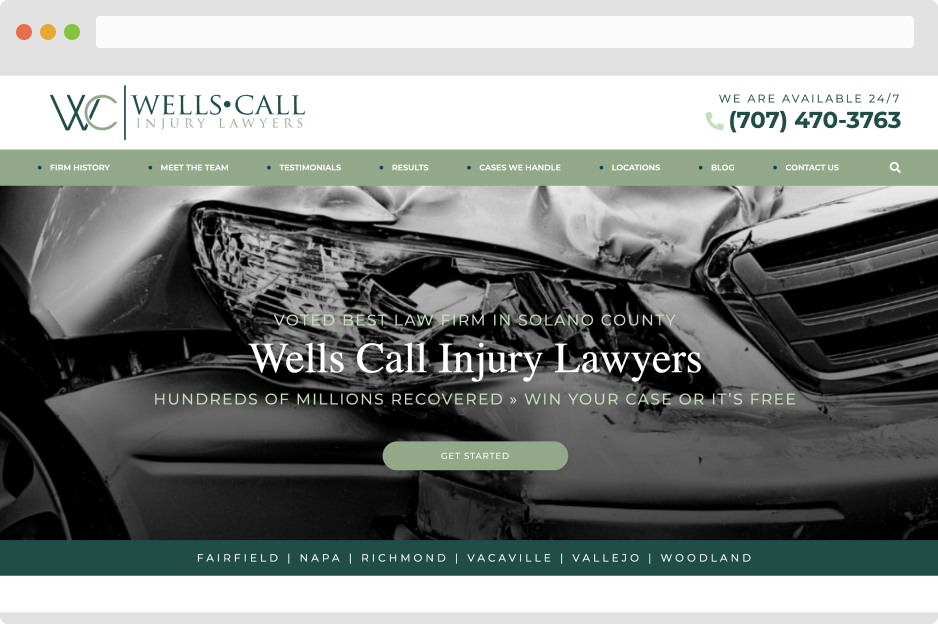

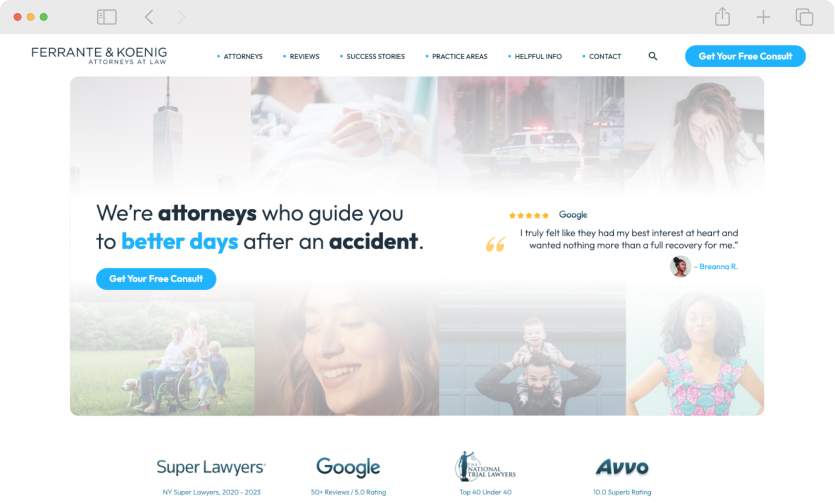

- Personal Injury

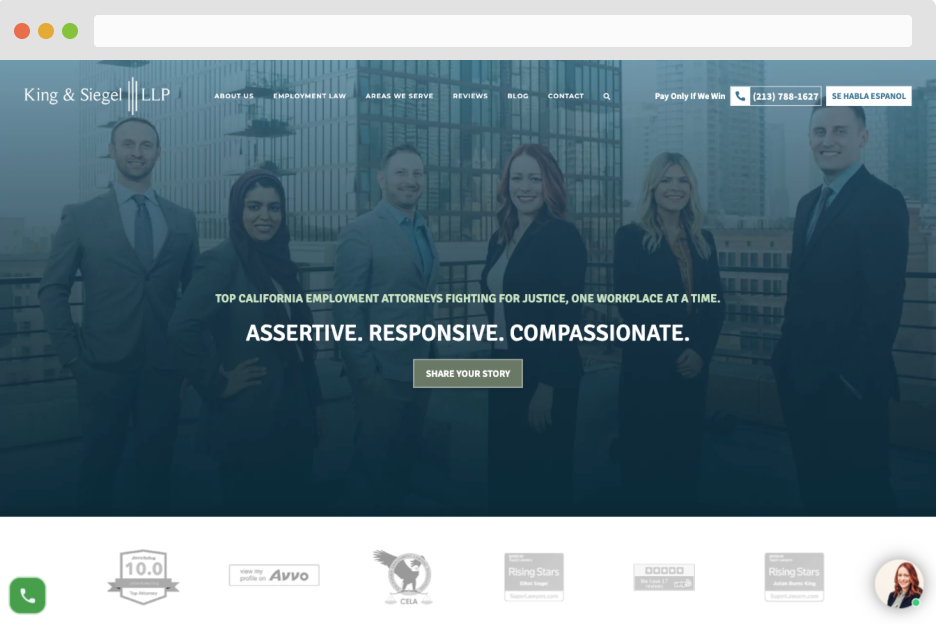

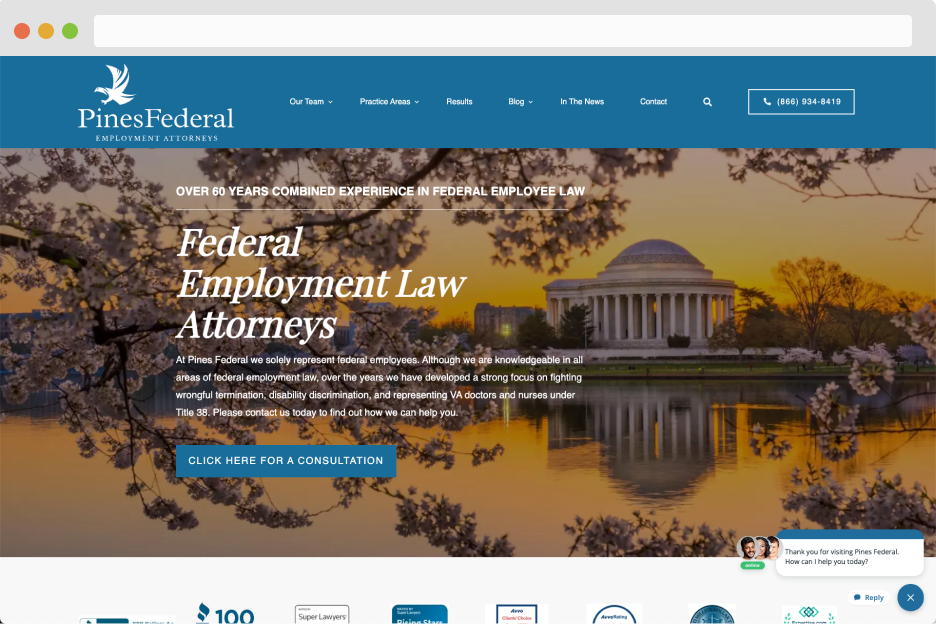

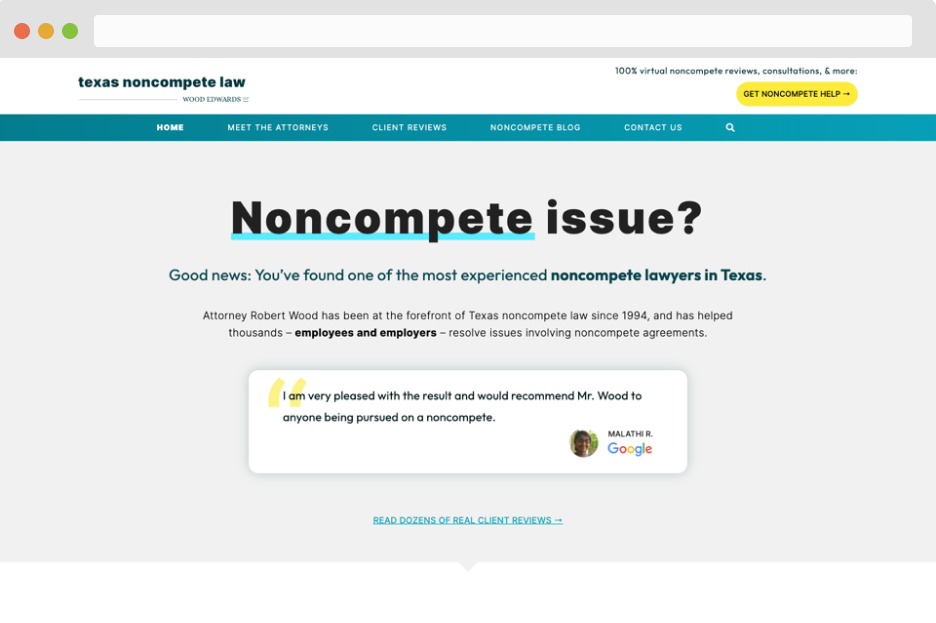

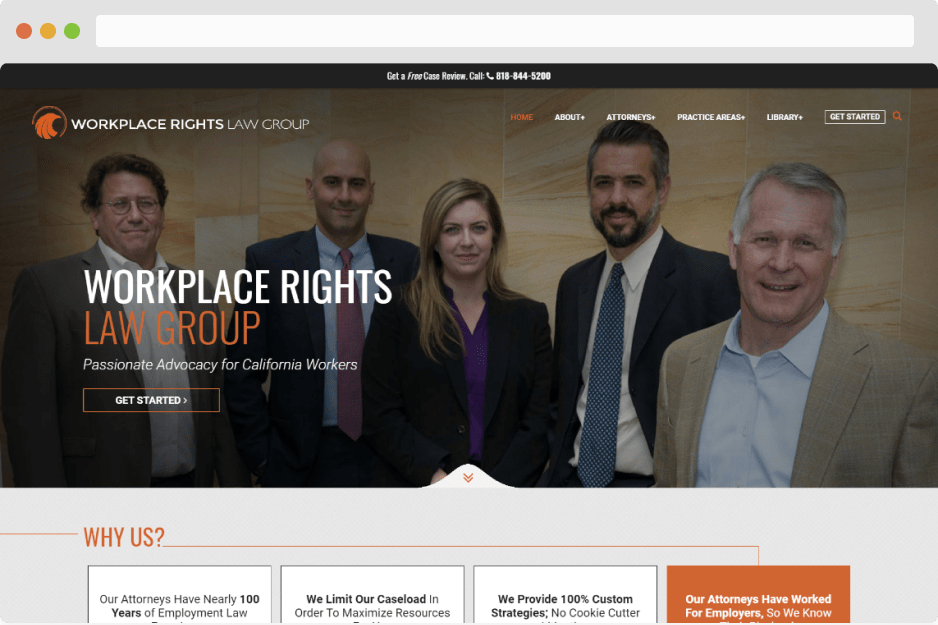

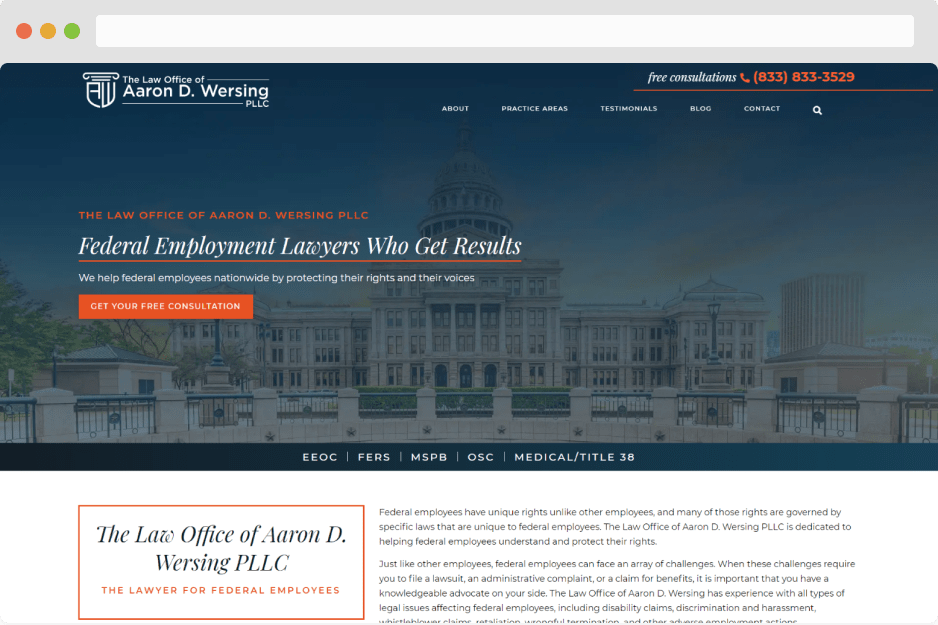

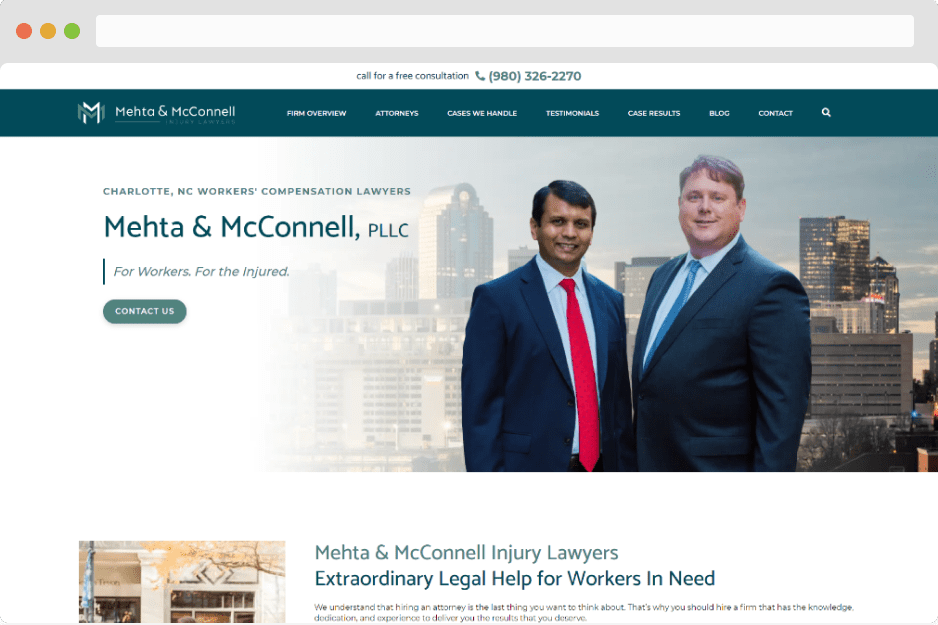

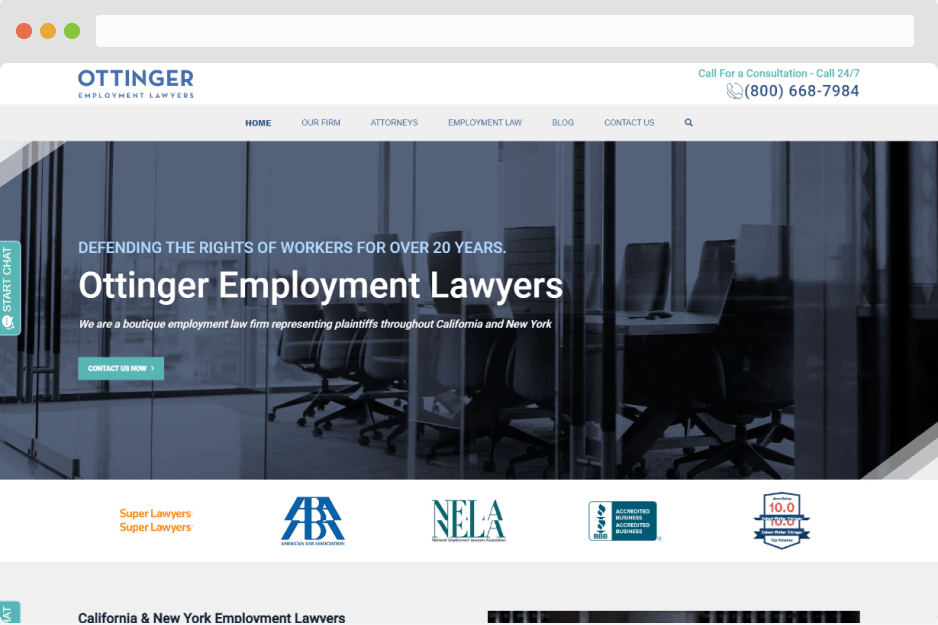

- Employment Law

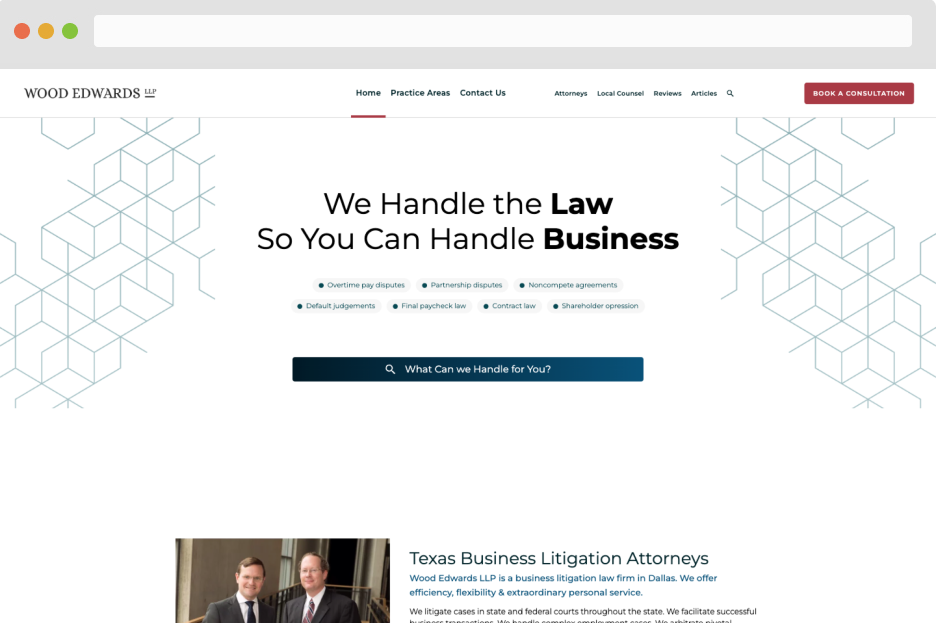

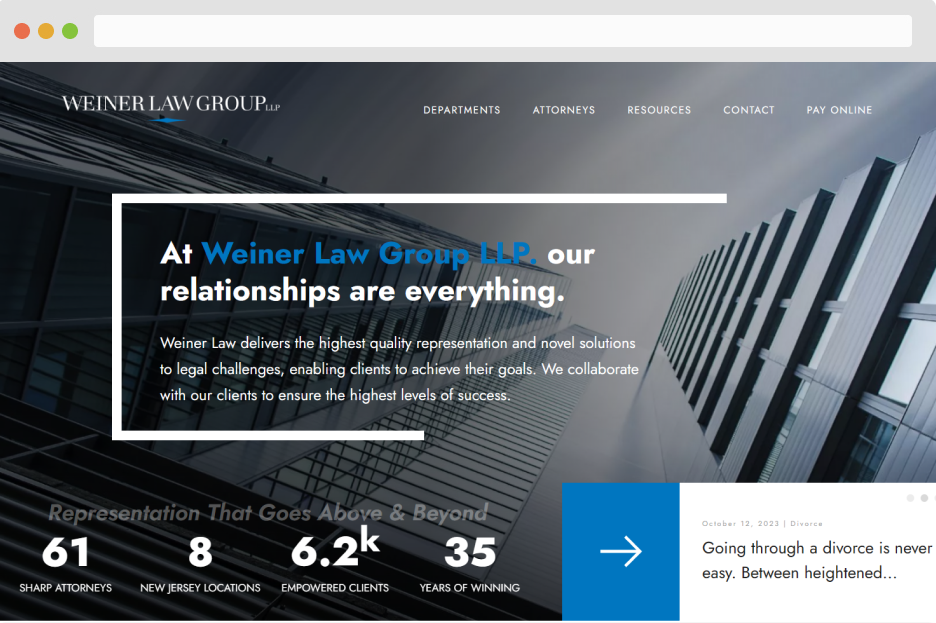

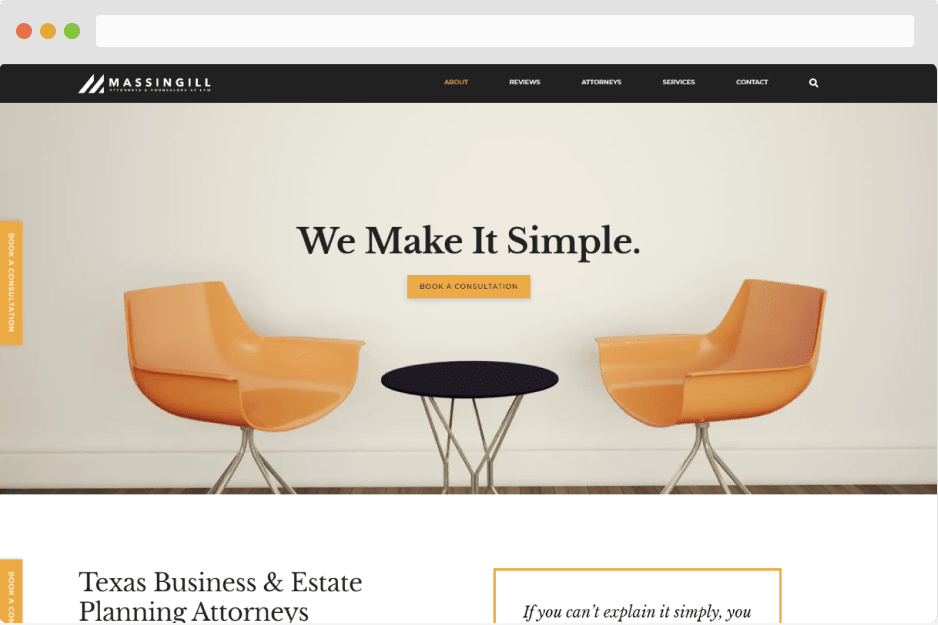

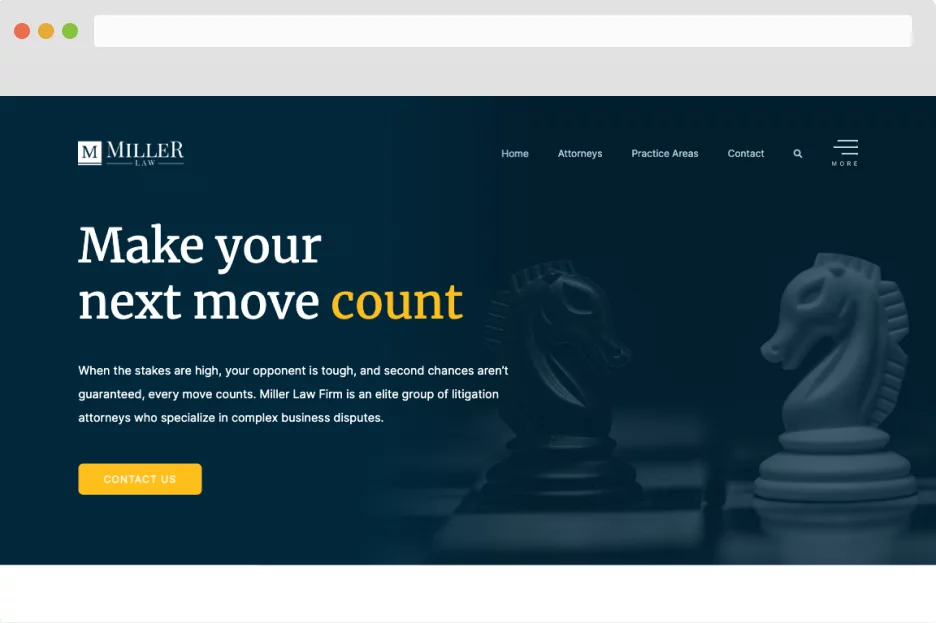

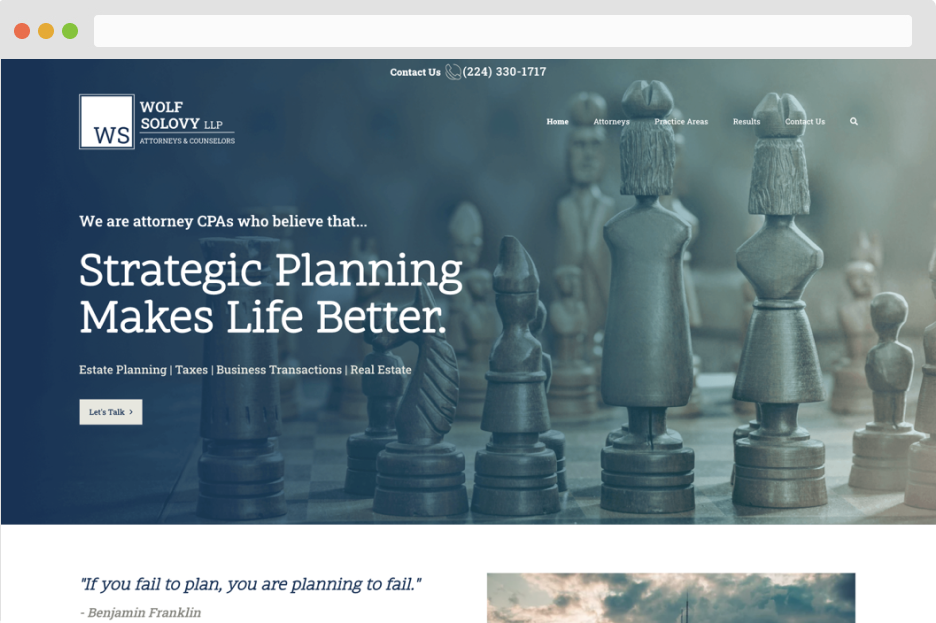

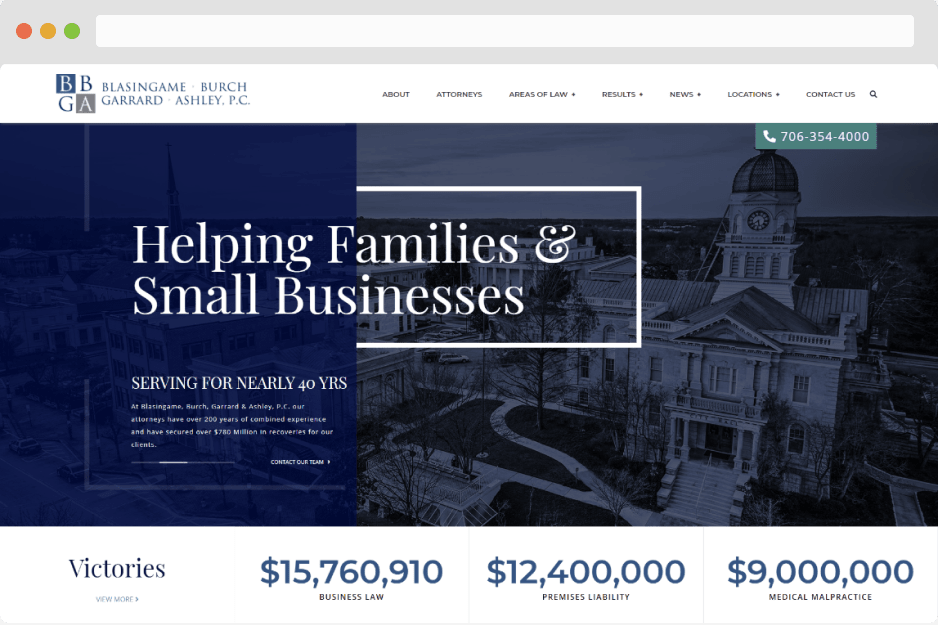

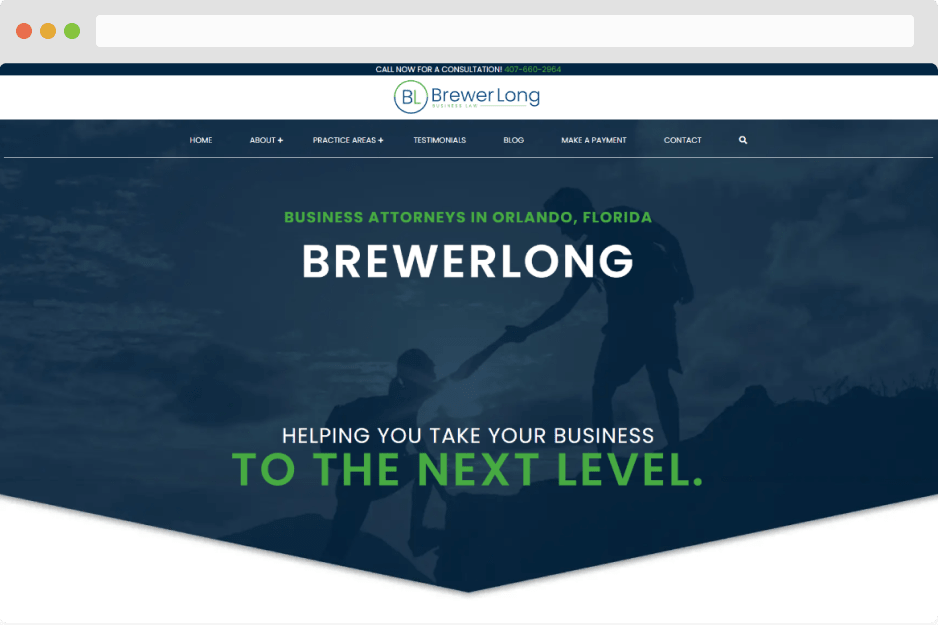

- Business Law

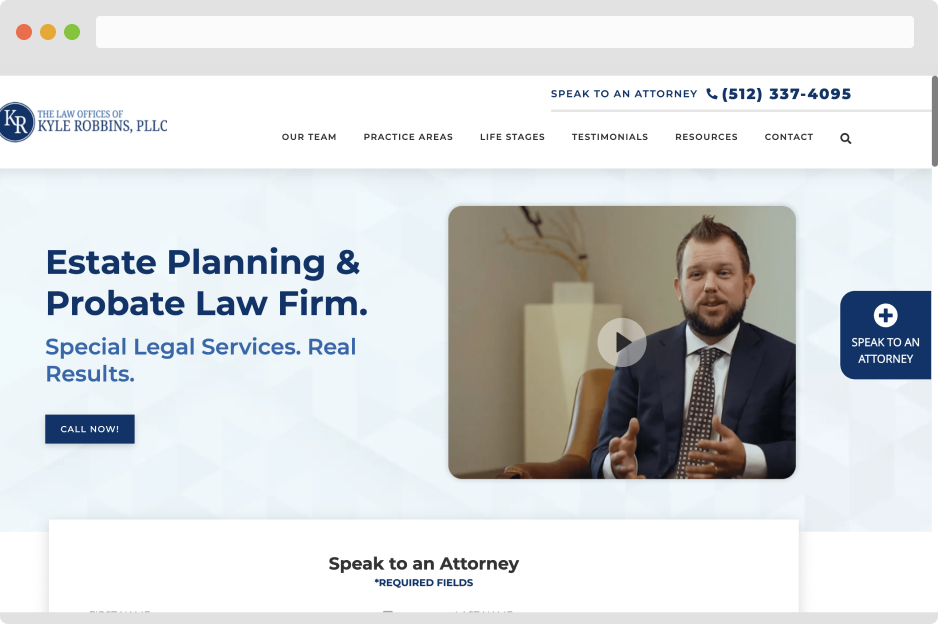

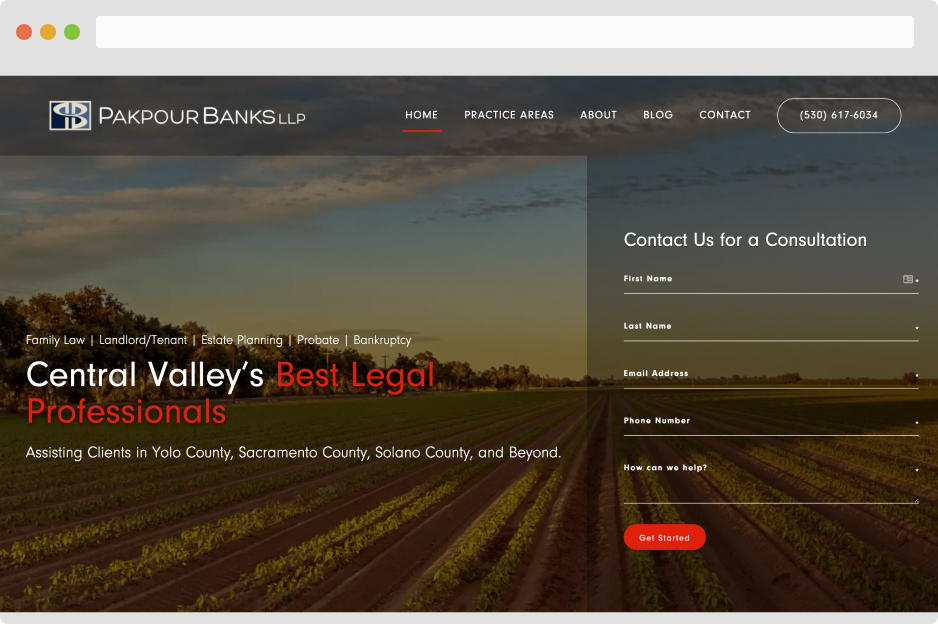

- Estate Planning

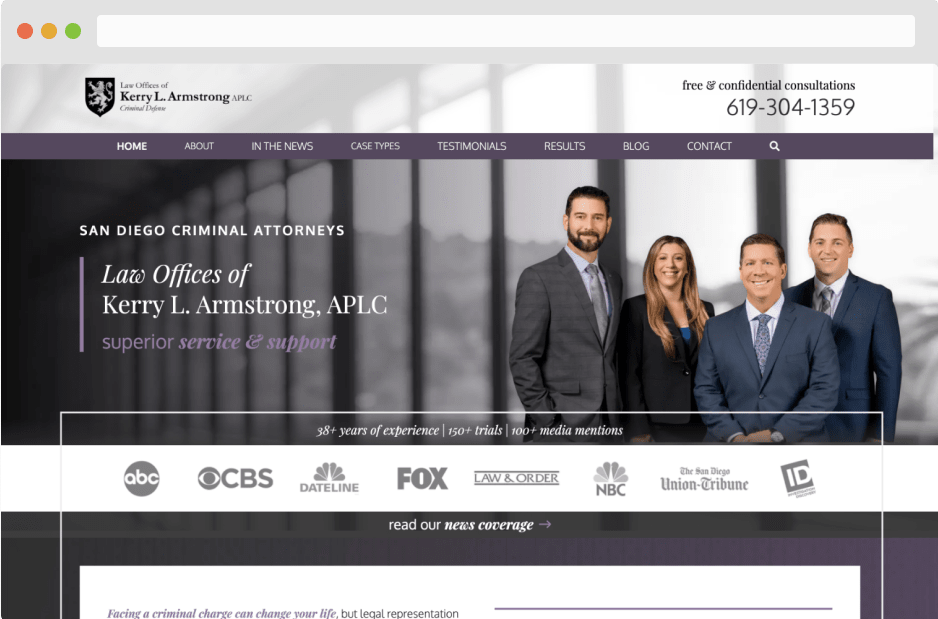

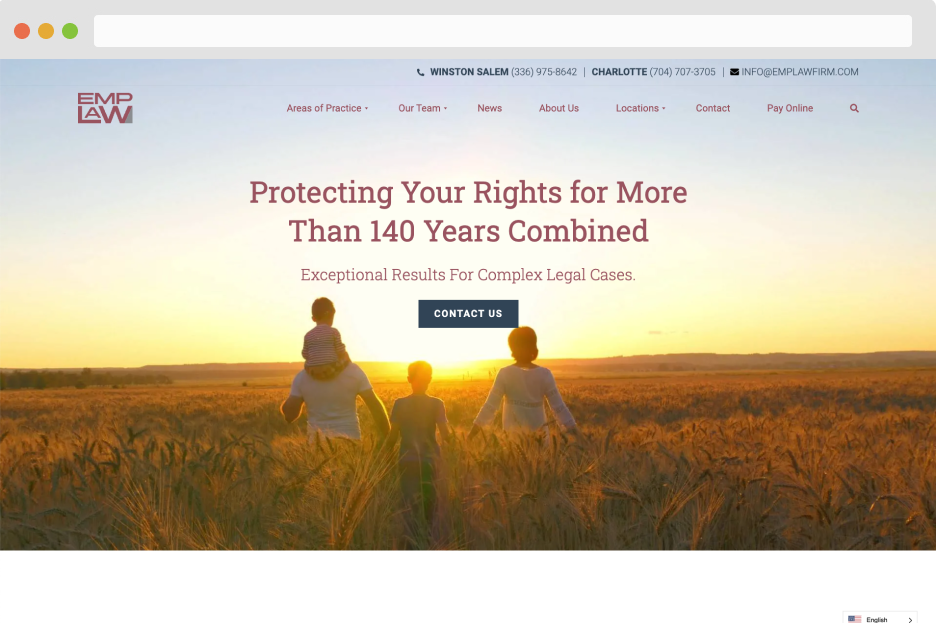

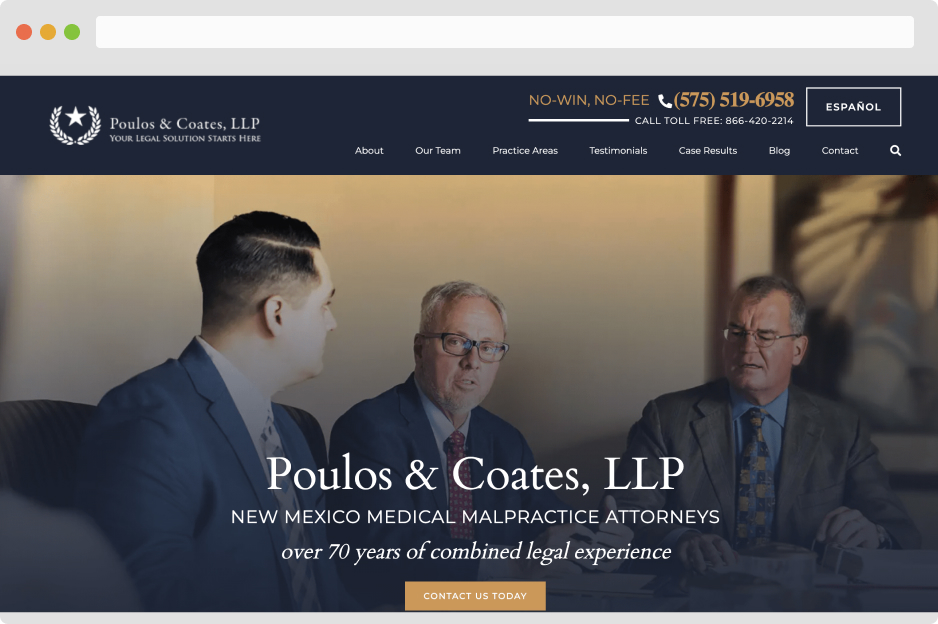

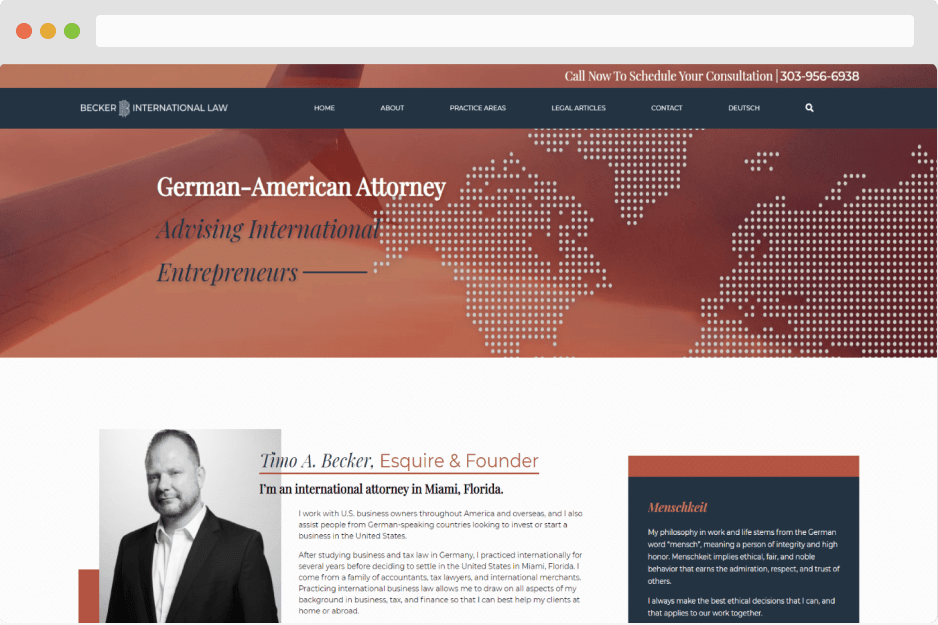

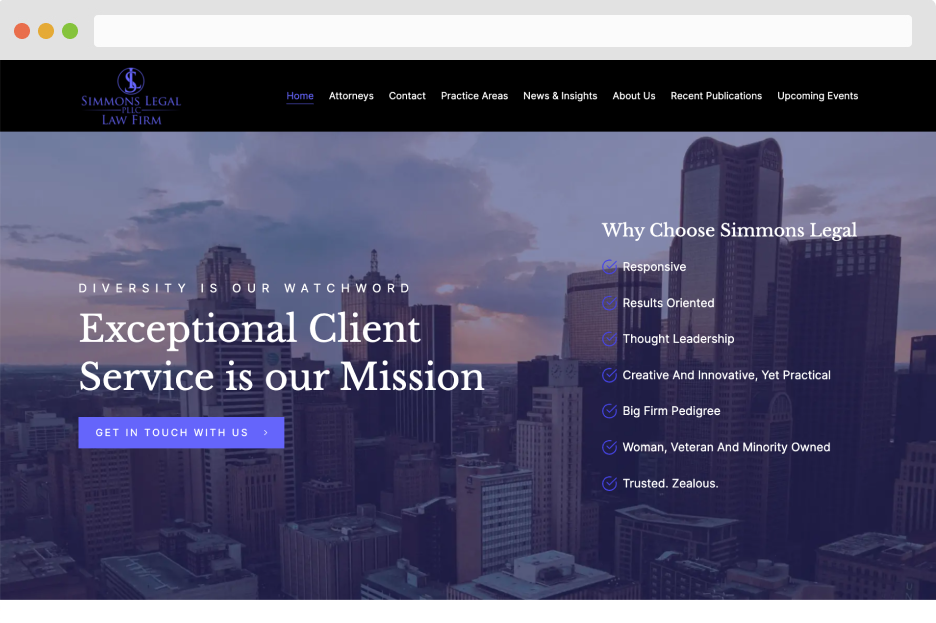

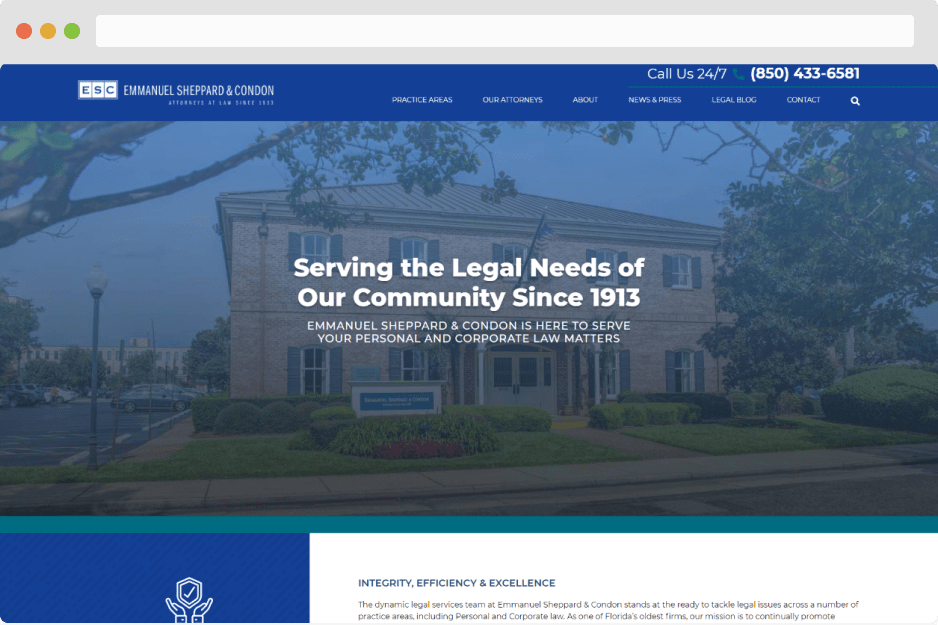

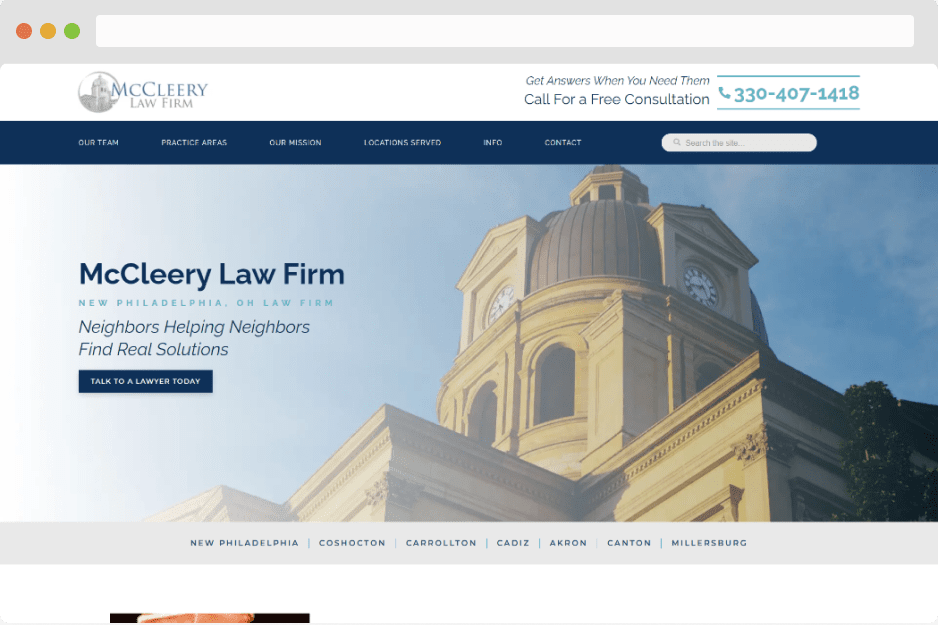

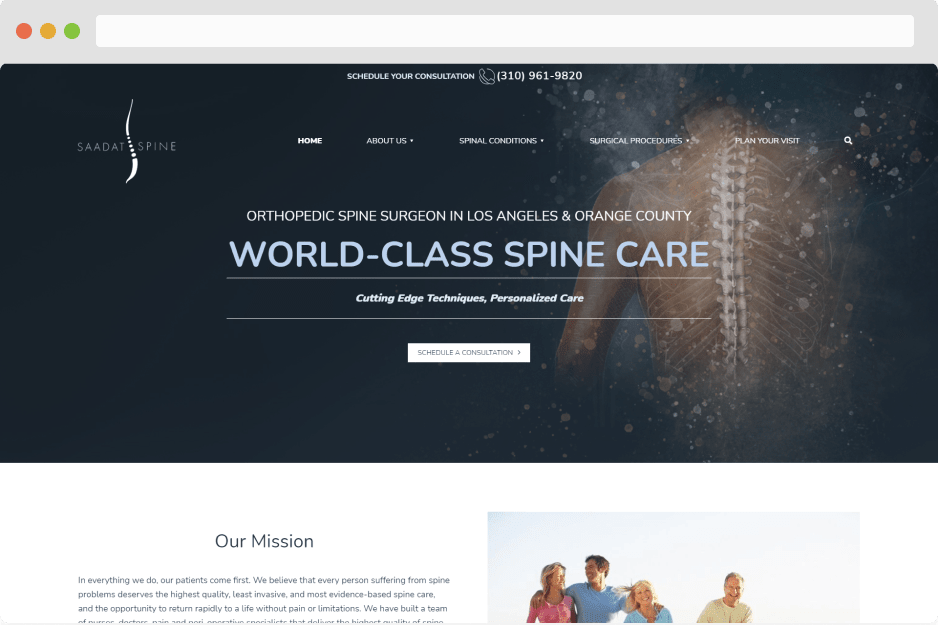

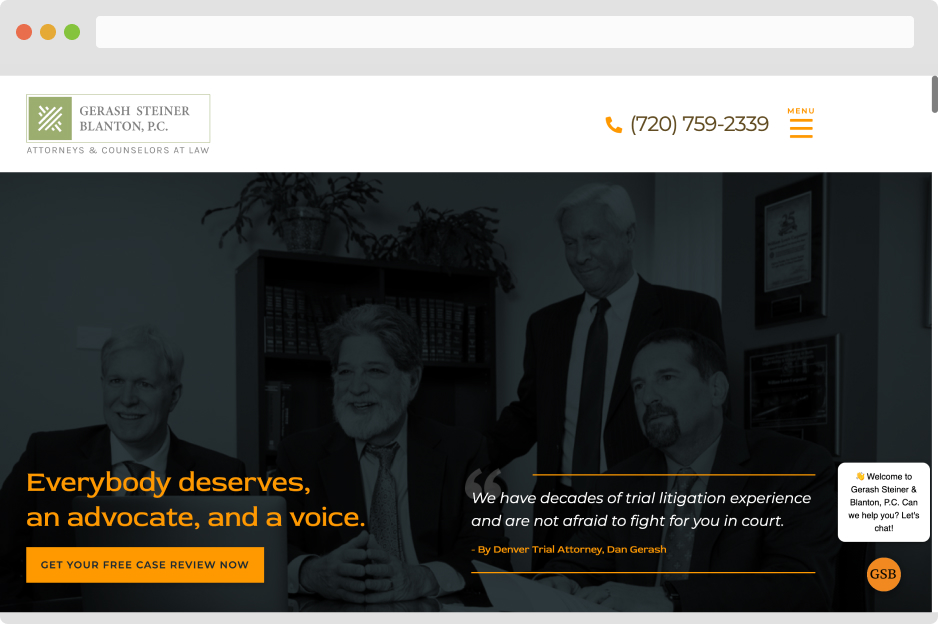

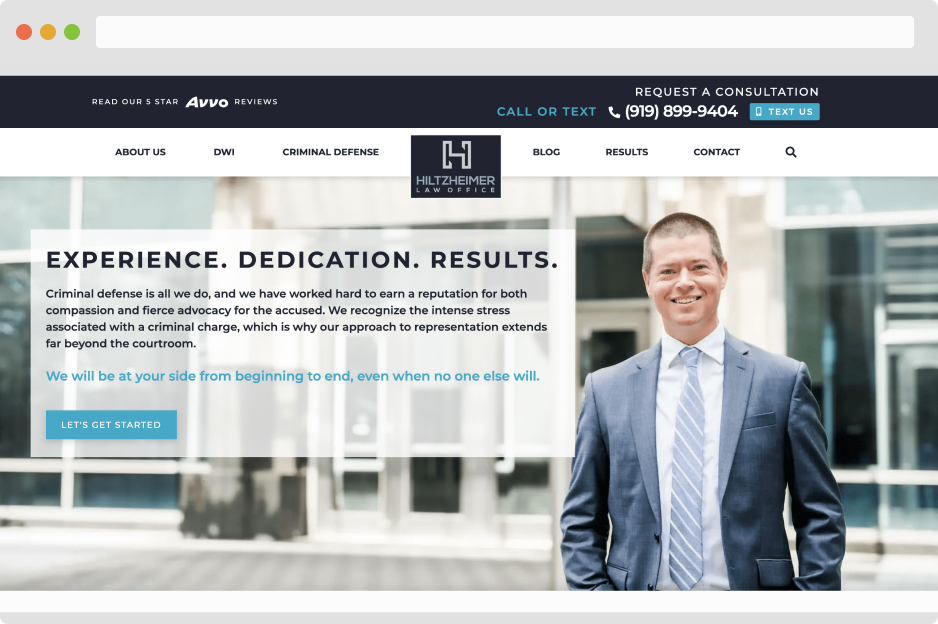

- Fully Custom Sites

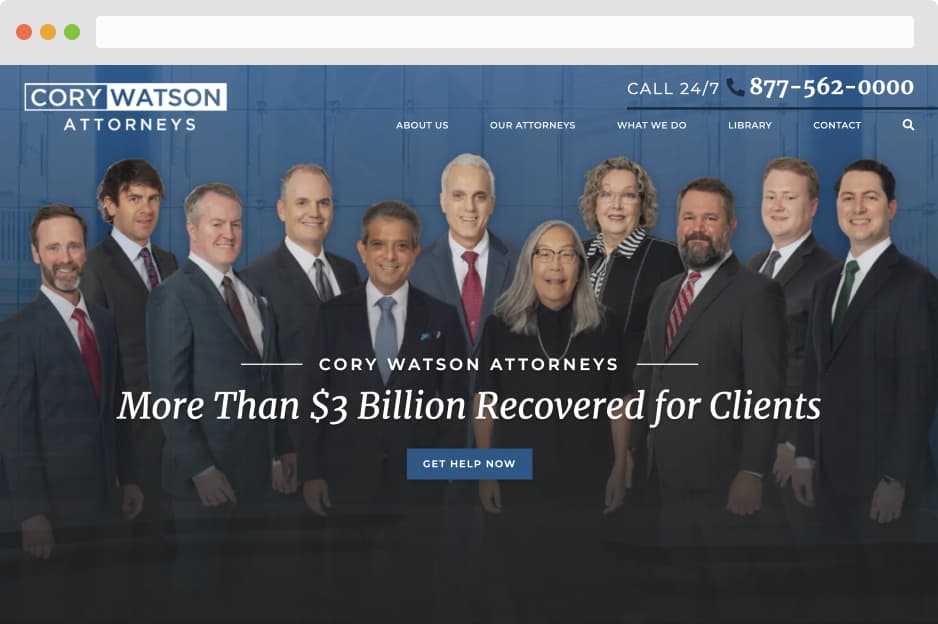

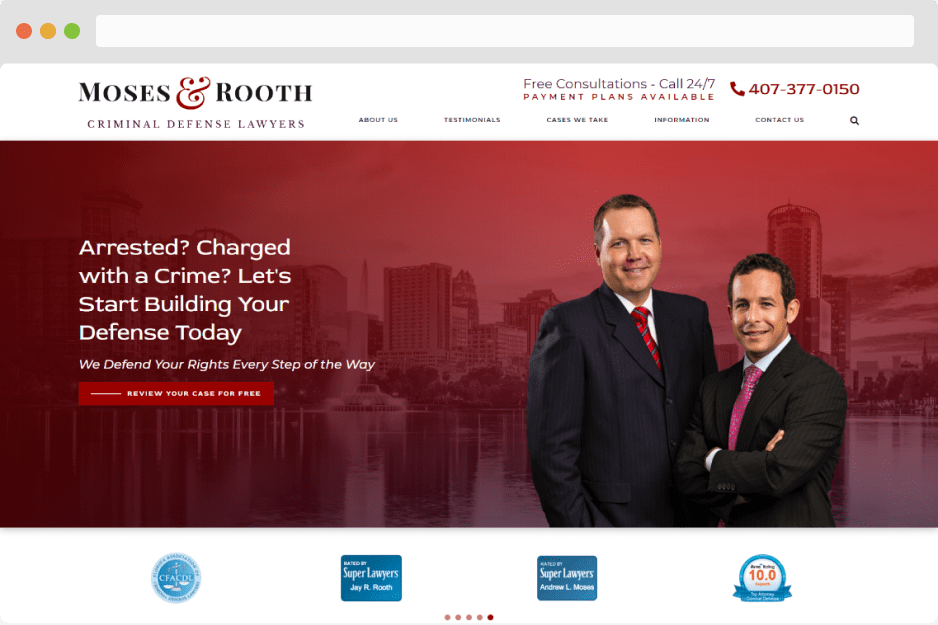

- Larger Law Firms

- Large Market Firms

- Family Law

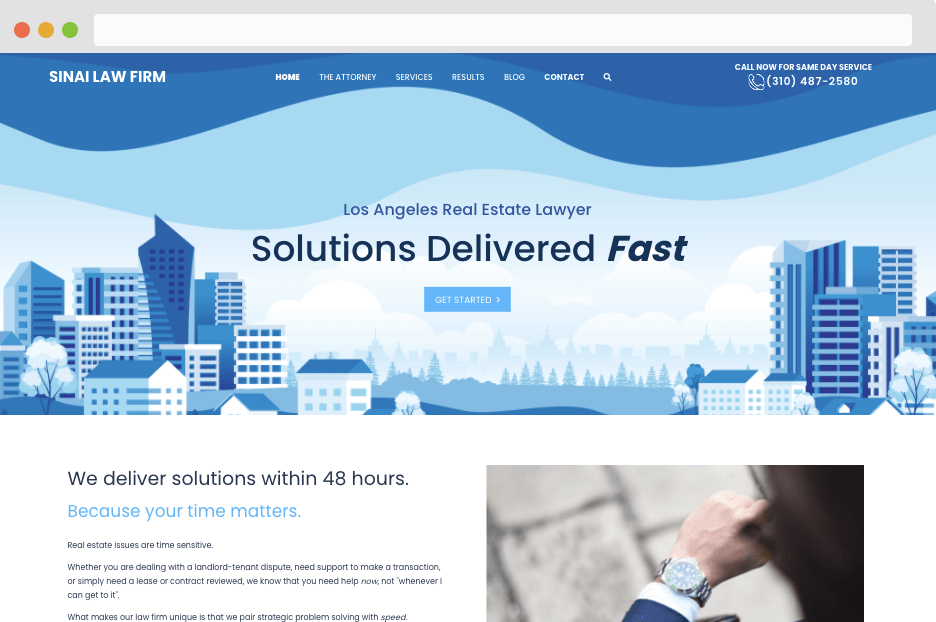

- Real Estate Law

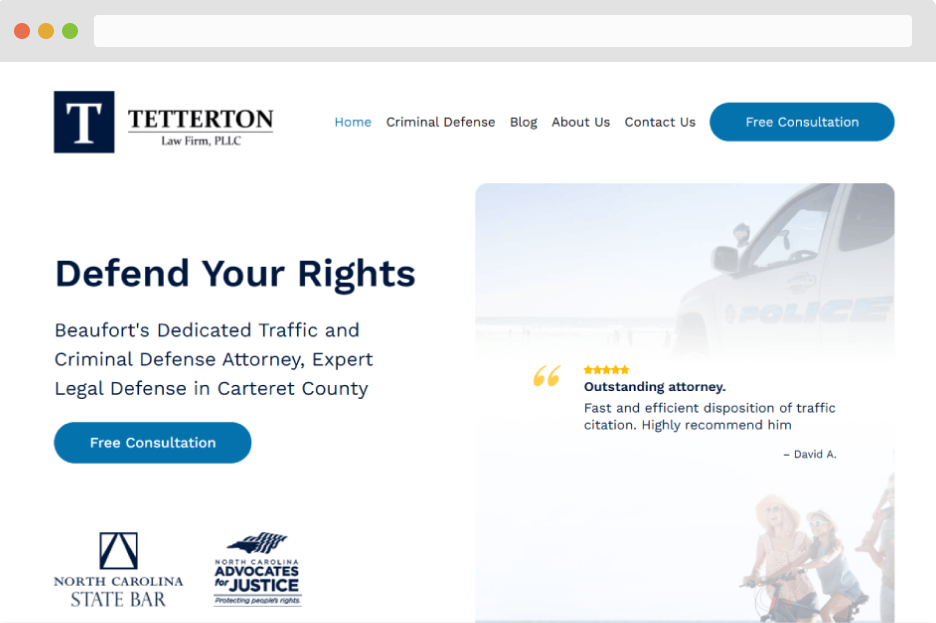

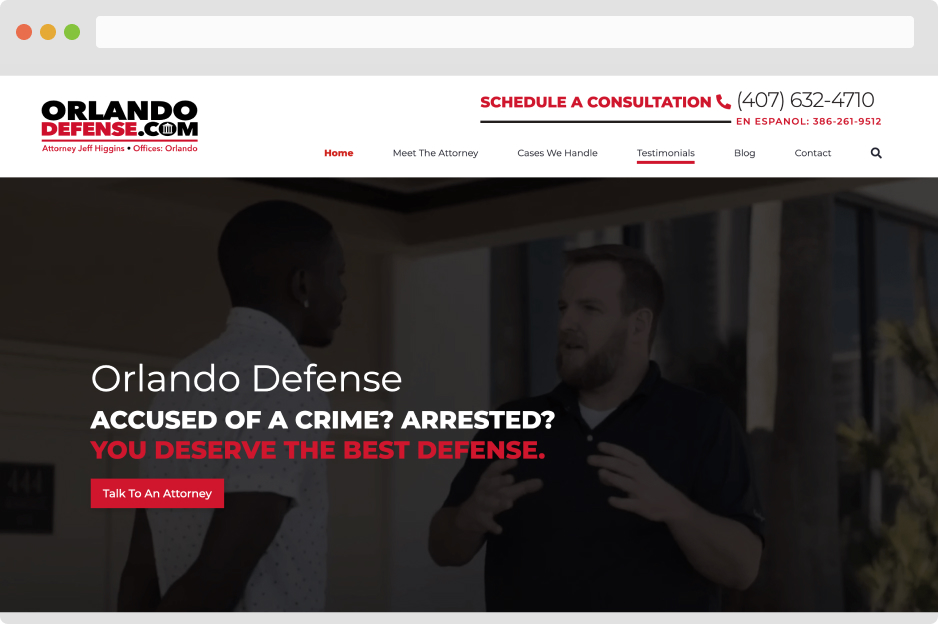

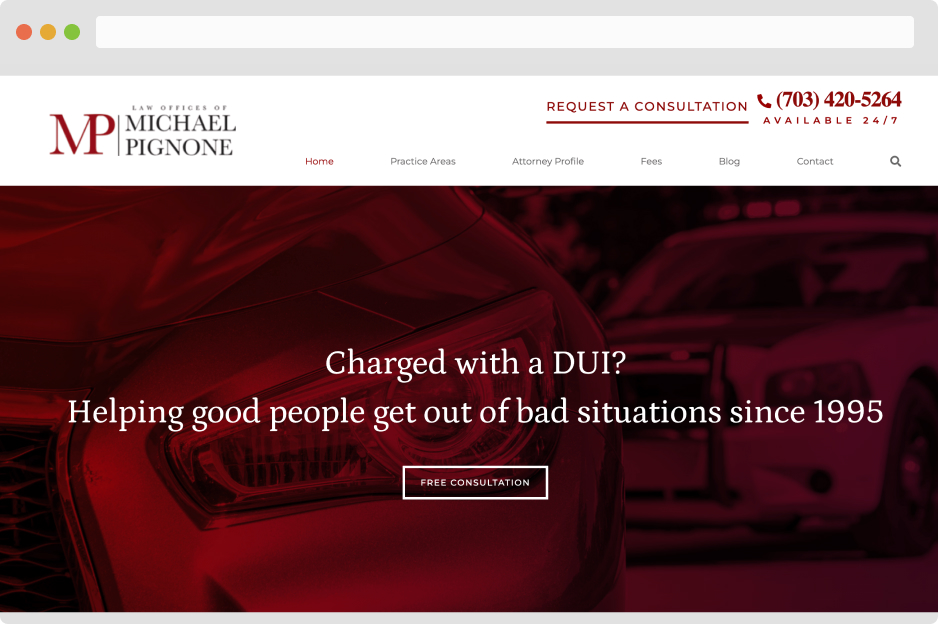

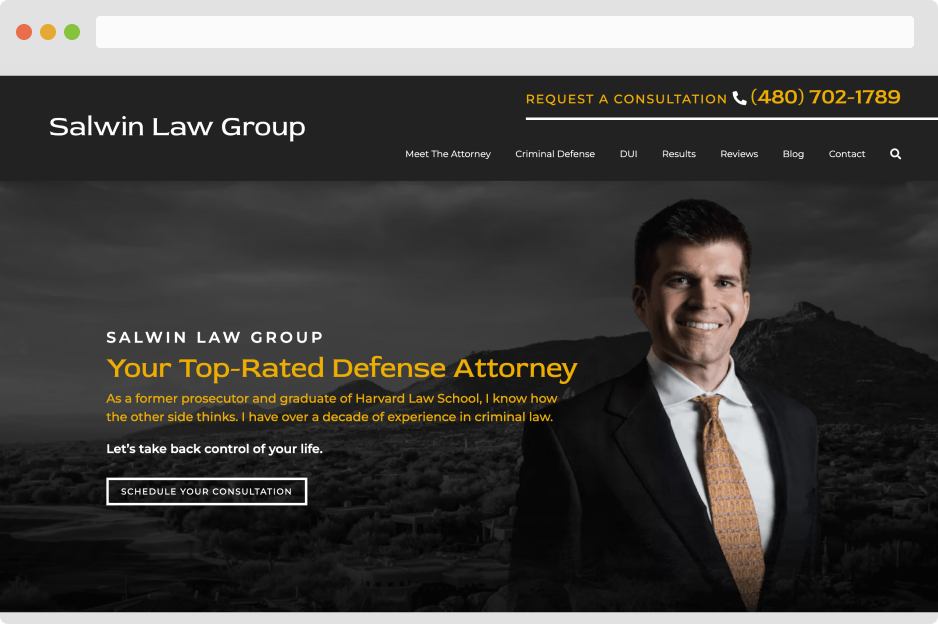

- Criminal Defense

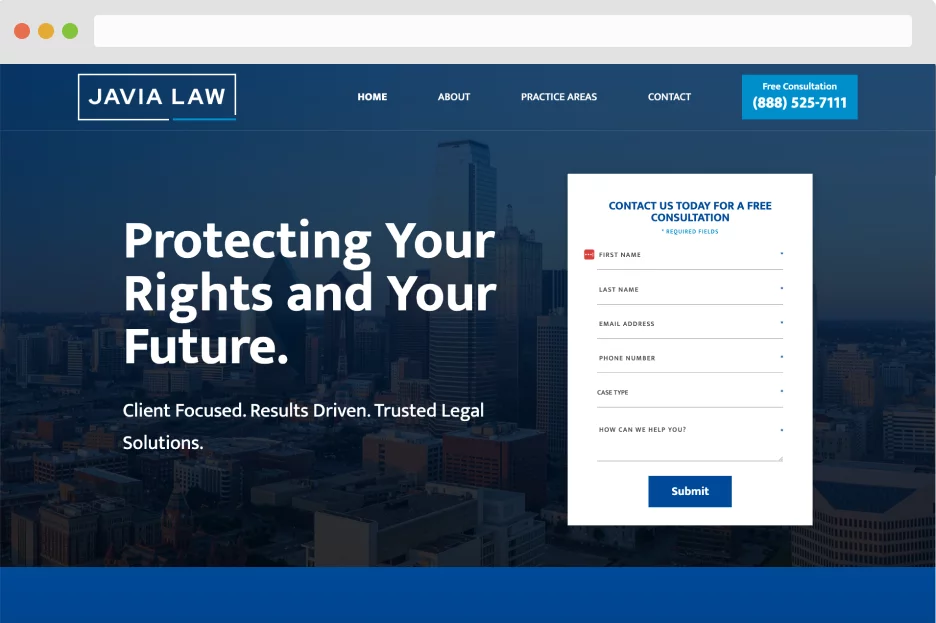

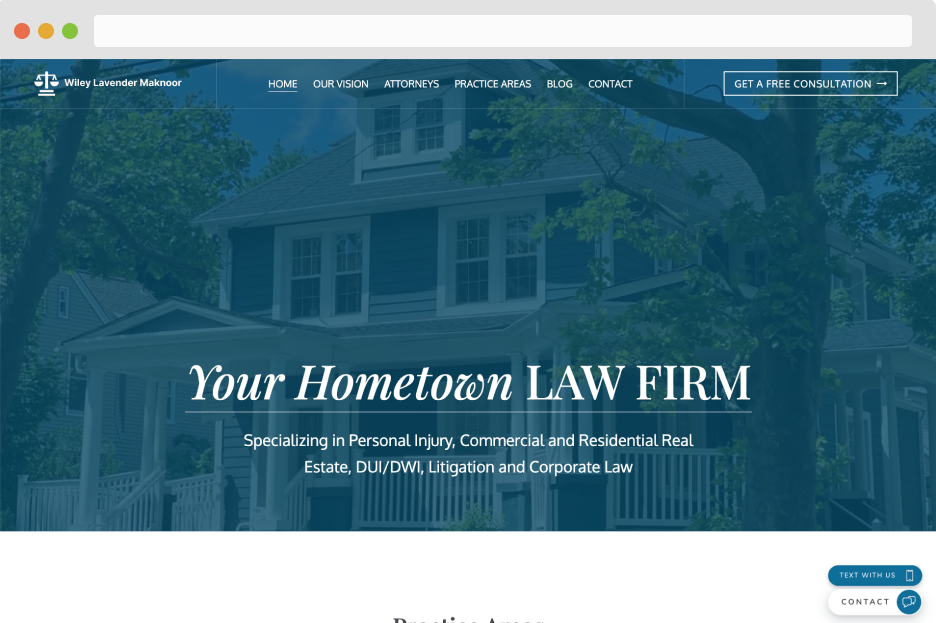

- Smaller Law Firms

- Custom Homepage Sites

- Small Market Firms

- 237% Organic Traffic

- 11 Qualified Leads Per Month

- Designed with StoryBrand